%global _empty_manifest_terminate_build 0

Name: python-mgzip

Version: 0.2.1

Release: 1

Summary: A multi-threading implementation of Python gzip module

License: MIT

URL: https://github.com/vinlyx/mgzip

Source0: https://mirrors.nju.edu.cn/pypi/web/packages/80/31/0f83d46a92aae1a39d6b78c22def34c6791de2a300f019695d6aee3e4e5a/mgzip-0.2.1.tar.gz

BuildArch: noarch

%description

# mgzip

A multi-threaded implementation of the Python gzip module

Uses a block-indexed GZIP file format to enable parallel compression and decompression. This implementation uses the 'FEXTRA' field to record the index of each compressed member. 'FEXTRA' is defined in the official GZIP file format specification version 4.3, so the output is fully compatible with normal GZIP implementations.
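
The header layout that keeps such files readable by ordinary tools can be sketched with the standard library alone. This is a minimal illustration, not mgzip's actual code: the helper names, the subfield ID `ZZ`, and its 4-byte payload are hypothetical, since the exact layout mgzip writes is not described here. The sketch builds one gzip member whose header carries an 'FEXTRA' field, reads the subfields back, and checks that the standard gzip module still decompresses the data.

```python
import gzip
import struct
import zlib

FEXTRA = 0x04  # FLG bit 2 in the gzip header (RFC 1952)


def make_member(payload: bytes, extra: bytes) -> bytes:
    """Build one gzip member whose header carries an FEXTRA field."""
    # ID1, ID2, CM=8 (deflate), FLG, MTIME, XFL, OS=255 (unknown)
    header = struct.pack("<BBBBIBB", 0x1F, 0x8B, 8, FEXTRA, 0, 0, 255)
    header += struct.pack("<H", len(extra)) + extra  # XLEN, then subfields
    deflater = zlib.compressobj(wbits=-15)  # raw DEFLATE, no zlib wrapper
    body = deflater.compress(payload) + deflater.flush()
    trailer = struct.pack("<II", zlib.crc32(payload), len(payload) & 0xFFFFFFFF)
    return header + body + trailer


def read_subfields(member: bytes) -> dict:
    """Return the FEXTRA subfields of the first member as {id: data}."""
    magic1, magic2, method, flags = struct.unpack_from("<BBBB", member, 0)
    if (magic1, magic2, method) != (0x1F, 0x8B, 8) or not flags & FEXTRA:
        return {}
    (xlen,) = struct.unpack_from("<H", member, 10)
    pos, end, fields = 12, 12 + xlen, {}
    while pos + 4 <= end:
        # Each subfield is SI1, SI2, LEN (2 bytes), then LEN bytes of data
        si, length = struct.unpack_from("<2sH", member, pos)
        fields[si] = member[pos + 4 : pos + 4 + length]
        pos += 4 + length
    return fields


payload = b"hello world"
# Hypothetical subfield "ZZ" holding a 4-byte little-endian number
extra = struct.pack("<2sHI", b"ZZ", 4, len(payload))
member = make_member(payload, extra)
fields = read_subfields(member)

# A reader that ignores FEXTRA still sees a valid gzip stream
assert gzip.decompress(member) == payload
```

A reader that understands the subfield could use it to locate member boundaries without decompressing, while any other reader simply skips the extra bytes.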

This module is **~25X** faster for compression and **~7X** faster for decompression (limited by IO and the Python implementation) on a computer with *24 CPUs*.

***In theory, compression and decompression speed should scale linearly with the number of CPU cores. In practice, performance is limited by IO and the programming language implementation.***
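
The idea behind the expected scaling can be sketched as follows: split the input into independent blocks, compress each block as its own gzip member on a worker thread, and concatenate the members. This is a simplified illustration using only the standard library, not mgzip's implementation. The names `split_blocks` and `parallel_compress` are hypothetical, and the sketch assumes CPython, where zlib generally releases the GIL while compressing.

```python
import gzip
from concurrent.futures import ThreadPoolExecutor


def split_blocks(data: bytes, blocksize: int) -> list:
    """Cut data into consecutive blocks of at most blocksize bytes."""
    return [data[i : i + blocksize] for i in range(0, len(data), blocksize)]


def parallel_compress(data: bytes, blocksize: int = 1 << 20, threads: int = 4) -> bytes:
    """Compress each block as a separate gzip member and join them in order."""
    blocks = split_blocks(data, blocksize)
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # pool.map preserves input order, so members line up with blocks
        members = list(pool.map(gzip.compress, blocks))
    return b"".join(members)


data = b"abc" * 100000
compressed = parallel_compress(data, blocksize=65536, threads=4)

# Concatenated members form a valid multi-member gzip stream
assert gzip.decompress(compressed) == data
```

Because every block is compressed independently, the work divides across cores, but reading the input and writing the output remain serial, which is one reason measured speedups fall short of linear.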

## Usage

Use it the same way as the gzip module:

```python
import mgzip

s = "a big string..."

## Use 8 threads to compress.
## None or 0 means using all CPUs (default)
## Compression block size is set to 200MB
with mgzip.open("test.txt.gz", "wt", thread=8, blocksize=2*10**8) as fw:
    fw.write(s)

with mgzip.open("test.txt.gz", "rt", thread=8) as fr:
    assert fr.read(len(s)) == s
```

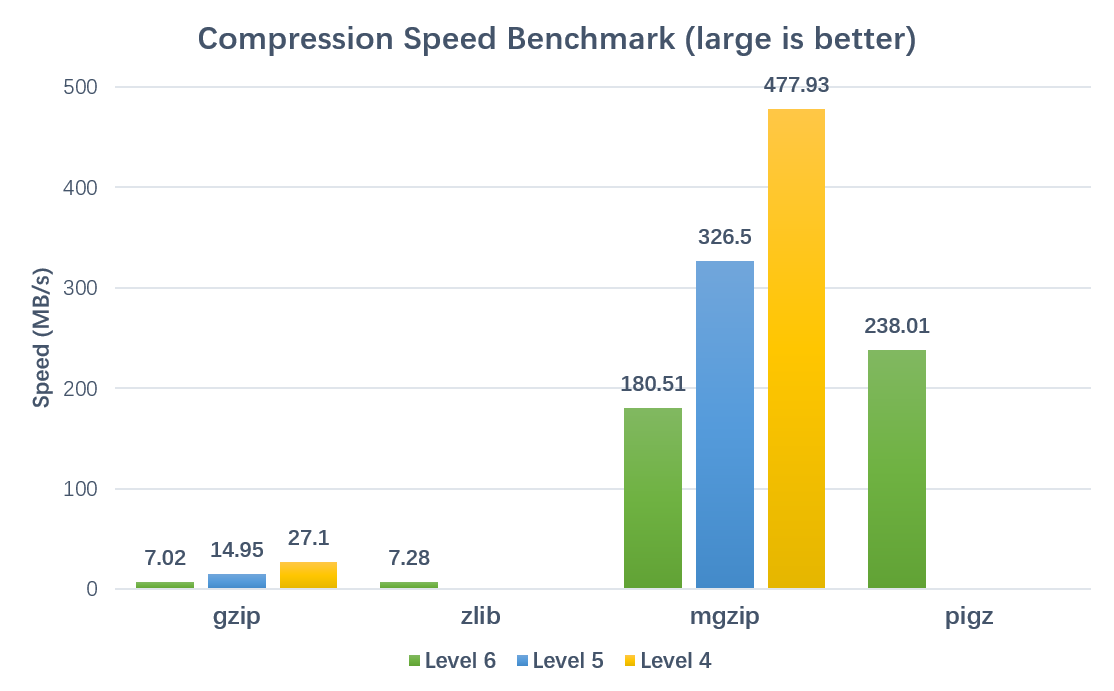

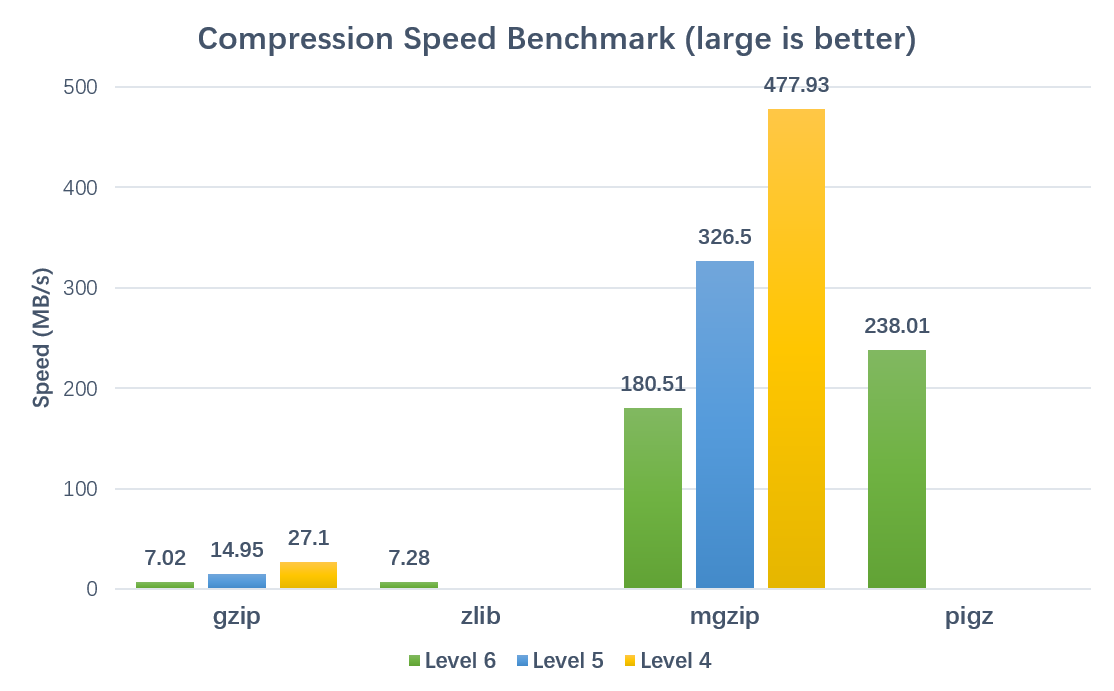

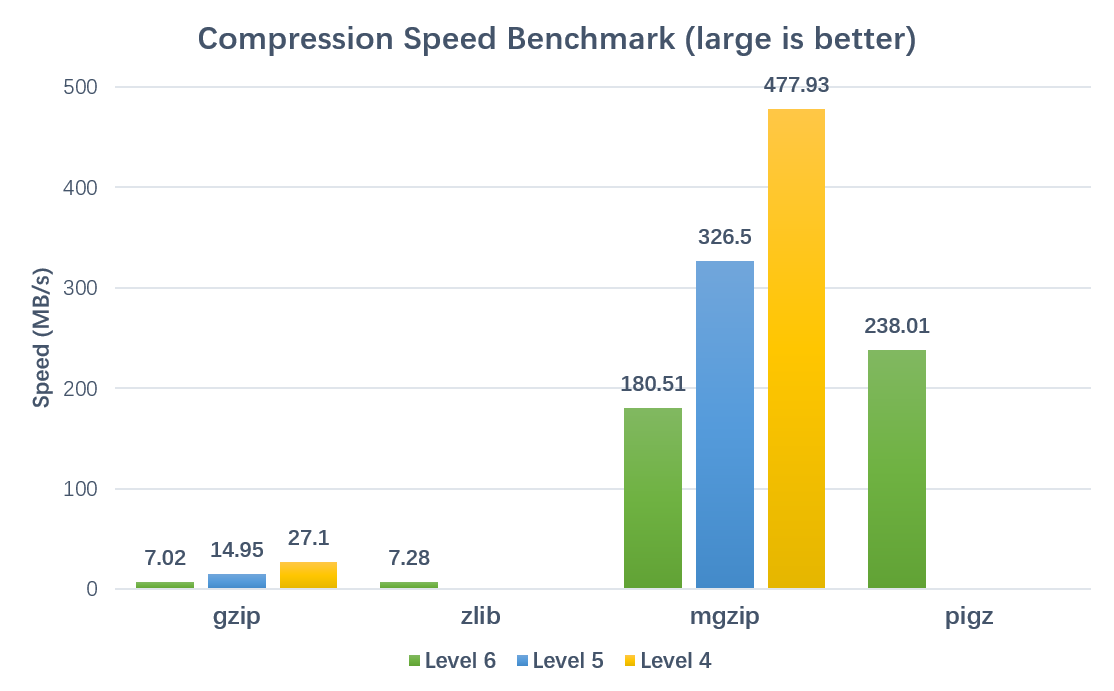

## Performance

### Compression:

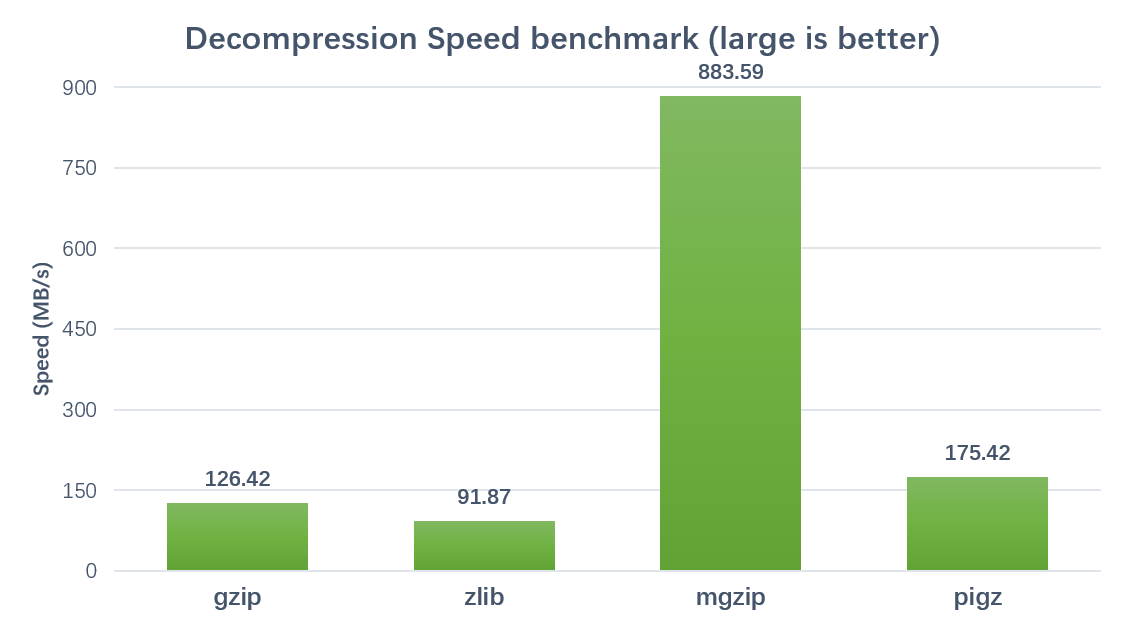

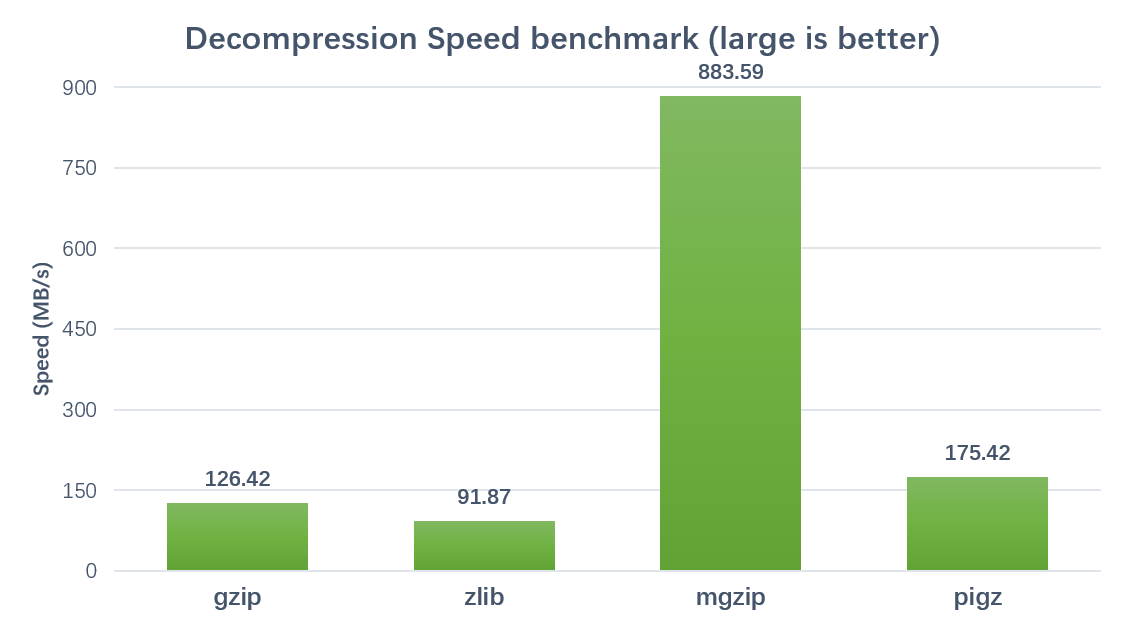

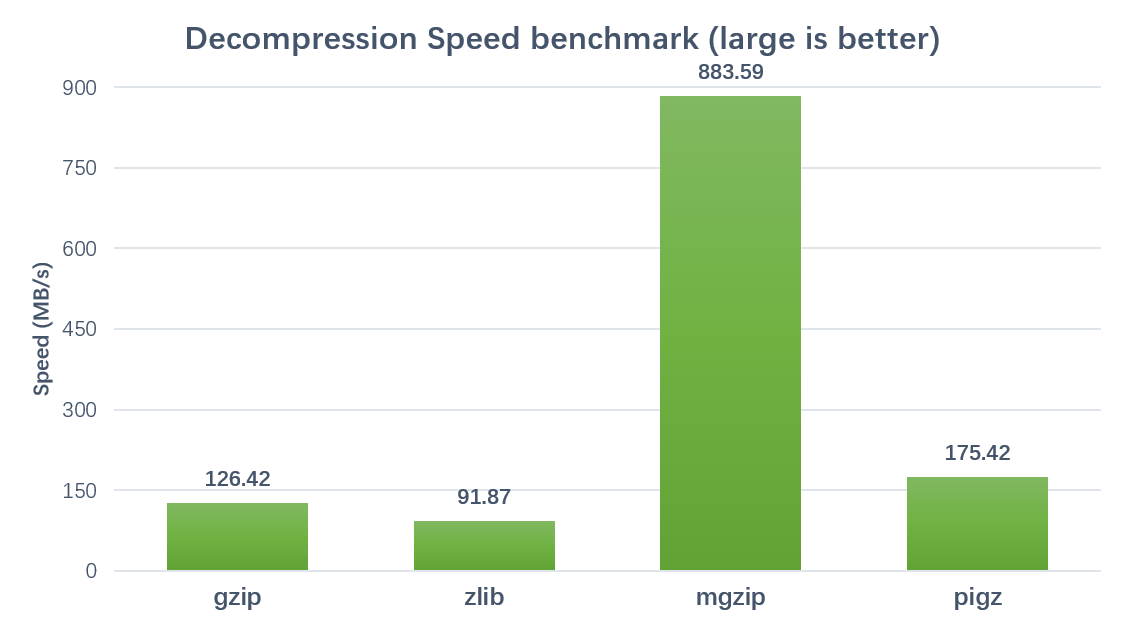

### Decompression:

*Benchmarked on a 24-core, 48-thread server (Xeon(R) CPU E5-2650 v4 @ 2.20GHz) with an 8.0GB FASTQ text file.*

*Using parameters thread=42 and blocksize=200000000*

## Warning

**This package only replaces the 'GzipFile' class and the 'open', 'compress', and 'decompress' functions of the standard gzip module. It is not well tested with other classes and functions.**

**As this is the first release version, some features are not yet supported, such as seek() and tell(). Any contribution or improvement is appreciated.**

%package -n python3-mgzip

Summary: A multi-threading implementation of Python gzip module

Provides: python-mgzip

BuildRequires: python3-devel

BuildRequires: python3-setuptools

BuildRequires: python3-pip

%description -n python3-mgzip

# mgzip

A multi-threaded implementation of the Python gzip module

Uses a block-indexed GZIP file format to enable parallel compression and decompression. This implementation uses the 'FEXTRA' field to record the index of each compressed member. 'FEXTRA' is defined in the official GZIP file format specification version 4.3, so the output is fully compatible with normal GZIP implementations.

This module is **~25X** faster for compression and **~7X** faster for decompression (limited by IO and the Python implementation) on a computer with *24 CPUs*.

***In theory, compression and decompression speed should scale linearly with the number of CPU cores. In practice, performance is limited by IO and the programming language implementation.***

## Usage

Use it the same way as the gzip module:

```python
import mgzip

s = "a big string..."

## Use 8 threads to compress.
## None or 0 means using all CPUs (default)
## Compression block size is set to 200MB
with mgzip.open("test.txt.gz", "wt", thread=8, blocksize=2*10**8) as fw:
    fw.write(s)

with mgzip.open("test.txt.gz", "rt", thread=8) as fr:
    assert fr.read(len(s)) == s
```

## Performance

### Compression:

### Decompression:

*Benchmarked on a 24-core, 48-thread server (Xeon(R) CPU E5-2650 v4 @ 2.20GHz) with an 8.0GB FASTQ text file.*

*Using parameters thread=42 and blocksize=200000000*

## Warning

**This package only replaces the 'GzipFile' class and the 'open', 'compress', and 'decompress' functions of the standard gzip module. It is not well tested with other classes and functions.**

**As this is the first release version, some features are not yet supported, such as seek() and tell(). Any contribution or improvement is appreciated.**

%package help

Summary: Development documents and examples for mgzip

Provides: python3-mgzip-doc

%description help

# mgzip

A multi-threaded implementation of the Python gzip module

Uses a block-indexed GZIP file format to enable parallel compression and decompression. This implementation uses the 'FEXTRA' field to record the index of each compressed member. 'FEXTRA' is defined in the official GZIP file format specification version 4.3, so the output is fully compatible with normal GZIP implementations.

This module is **~25X** faster for compression and **~7X** faster for decompression (limited by IO and the Python implementation) on a computer with *24 CPUs*.

***In theory, compression and decompression speed should scale linearly with the number of CPU cores. In practice, performance is limited by IO and the programming language implementation.***

## Usage

Use it the same way as the gzip module:

```python
import mgzip

s = "a big string..."

## Use 8 threads to compress.
## None or 0 means using all CPUs (default)
## Compression block size is set to 200MB
with mgzip.open("test.txt.gz", "wt", thread=8, blocksize=2*10**8) as fw:
    fw.write(s)

with mgzip.open("test.txt.gz", "rt", thread=8) as fr:
    assert fr.read(len(s)) == s
```

## Performance

### Compression:

### Decompression:

*Benchmarked on a 24-core, 48-thread server (Xeon(R) CPU E5-2650 v4 @ 2.20GHz) with an 8.0GB FASTQ text file.*

*Using parameters thread=42 and blocksize=200000000*

## Warning

**This package only replaces the 'GzipFile' class and the 'open', 'compress', and 'decompress' functions of the standard gzip module. It is not well tested with other classes and functions.**

**As this is the first release version, some features are not yet supported, such as seek() and tell(). Any contribution or improvement is appreciated.**

%prep

%autosetup -n mgzip-0.2.1

%build

%py3_build

%install

%py3_install

install -d -m755 %{buildroot}/%{_pkgdocdir}

if [ -d doc ]; then cp -arf doc %{buildroot}/%{_pkgdocdir}; fi

if [ -d docs ]; then cp -arf docs %{buildroot}/%{_pkgdocdir}; fi

if [ -d example ]; then cp -arf example %{buildroot}/%{_pkgdocdir}; fi

if [ -d examples ]; then cp -arf examples %{buildroot}/%{_pkgdocdir}; fi

pushd %{buildroot}

if [ -d usr/lib ]; then
    find usr/lib -type f -printf "/%h/%f\n" >> filelist.lst
fi

if [ -d usr/lib64 ]; then
    find usr/lib64 -type f -printf "/%h/%f\n" >> filelist.lst
fi

if [ -d usr/bin ]; then
    find usr/bin -type f -printf "/%h/%f\n" >> filelist.lst
fi

if [ -d usr/sbin ]; then
    find usr/sbin -type f -printf "/%h/%f\n" >> filelist.lst
fi

touch doclist.lst

if [ -d usr/share/man ]; then
    find usr/share/man -type f -printf "/%h/%f.gz\n" >> doclist.lst
fi

popd

mv %{buildroot}/filelist.lst .

mv %{buildroot}/doclist.lst .

%files -n python3-mgzip -f filelist.lst

%dir %{python3_sitelib}/*

%files help -f doclist.lst

%{_docdir}/*

%changelog

* Sun Apr 23 2023 Python_Bot <Python_Bot@openeuler.org> - 0.2.1-1

- Package Spec generated
