%global _empty_manifest_terminate_build 0

Name: python-pyabcranger

Version: 0.0.69

Release: 1

Summary: ABC random forests for model choice and parameter estimation, python wrapper

License: MIT License

URL: https://github.com/diyabc/abcranger

Source0: https://mirrors.aliyun.com/pypi/web/packages/47/37/21ddd826ccf085c31879705a0b283dd3f16e0712ce8563d325e786b7ed7b/pyabcranger-0.0.69.tar.gz

%description

- <a href="#python" id="toc-python">Python</a>

- <a href="#usage" id="toc-usage">Usage</a>

- <a href="#model-choice" id="toc-model-choice">Model Choice</a>

- <a href="#parameter-estimation" id="toc-parameter-estimation">Parameter Estimation</a>

- <a href="#various" id="toc-various">Various</a>

- <a href="#todo" id="toc-todo">TODO</a>

- <a href="#references" id="toc-references">References</a>

<!-- pandoc -f markdown README-ORIG.md -t gfm -o README.md --citeproc -s --toc --toc-depth=1 --webtex -->

Random forest methodologies for:

- ABC model choice ([Pudlo et al. 2015](#ref-pudlo2015reliable))
- ABC Bayesian parameter inference ([Raynal et al. 2018](#ref-raynal2016abc))

Libraries we use:

- [Ranger](https://github.com/imbs-hl/ranger) ([Wright and Ziegler 2015](#ref-wright2015ranger)): we use our own fork and have tuned forests to do “online”[^1] computations (growing trees and making predictions in the same pass, which removes the need for in-memory storage of the whole forest)[^2].
- [Eigen3](http://eigen.tuxfamily.org) ([Guennebaud, Jacob, et al. 2010](#ref-eigenweb))

Note that we use our own implementation of LDA and PLS from ([Friedman, Hastie, and Tibshirani 2001, 1:81, 114](#ref-friedman2001elements)). PLS is optimized for the univariate case, see [5.1](#sec-plsalgo). To speed up linear algebra on large reftables, the Linux binaries (standalone and Python wheel) are statically linked with [Intel’s Math Kernel Library](https://www.intel.com/content/www/us/en/develop/documentation/oneapi-programming-guide/top/api-based-programming/intel-oneapi-math-kernel-library-onemkl.html), in order to leverage multicore and SIMD extensions on modern CPUs.

There is one set of binaries, which contains a macOS/Linux/Windows (x64 only) binary for each platform. They are available within the “[Releases](https://github.com/fradav/abcranger/releases)” tab, under the “Assets” section (unfold it to see the list).

This is a pure command line binary, and there are no prerequisites or library dependencies needed to run it. Just download it and launch it from your terminal software of choice. The usual caveats with command line executables apply here: if you’re not proficient with the command line interface of your platform, please learn some basics or ask someone who might help you in those matters.

The standalone is part of a specialized Population Genetics graphical interface, [DIYABC-RF](https://diyabc.github.io/), presented in MER (Molecular Ecology Resources, Special Issue) ([Collin et al. 2021](#ref-Collin_2021)).

# Python

## Installation

``` bash
pip install pyabcranger
```

## Notebook examples

- On a [toy example with MA(q)](https://github.com/diyabc/abcranger/blob/master/notebooks/Toy%20example%20MA(q).ipynb), using ELFI ([Lintusaari et al. 2018](#ref-JMLR:v19:17-374)) as graph-powered engine.
- [Population genetics demo](https://github.com/diyabc/abcranger/blob/master/notebooks/Population%20genetics%20Demo.ipynb), with data from ([Collin et al. 2021](#ref-Collin_2021)), available [there](https://github.com/diyabc/diyabc/tree/master/diyabc-tests/MER/modelchoice/IndSeq)

# Usage

``` text
 - ABC Random Forest - Model choice or parameter estimation command line options
Usage:
  -h, --header arg        Header file (default: headerRF.txt)
  -r, --reftable arg      Reftable file (default: reftableRF.bin)
  -b, --statobs arg       Statobs file (default: statobsRF.txt)
  -o, --output arg        Prefix output (modelchoice_out or estimparam_out by
                          default)
  -n, --nref arg          Number of samples, 0 means all (default: 0)
  -m, --minnodesize arg   Minimal node size. 0 means 1 for classification or
                          5 for regression (default: 0)
  -t, --ntree arg         Number of trees (default: 500)
  -j, --threads arg       Number of threads, 0 means all (default: 0)
  -s, --seed arg          Seed, generated by default (default: 0)
  -c, --noisecolumns arg  Number of noise columns (default: 5)
      --nolinear          Disable LDA for model choice or PLS for parameter
                          estimation
      --plsmaxvar arg     Percentage of maximum explained Y-variance for
                          retaining pls axis (default: 0.9)
      --chosenscen arg    Chosen scenario (mandatory for parameter
                          estimation)
      --noob arg          number of oob testing samples (mandatory for
                          parameter estimation)
      --parameter arg     name of the parameter of interest (mandatory for
                          parameter estimation)
  -g, --groups arg        Groups of models
      --help              Print help
```

- If you provide `--chosenscen`, `--parameter` and `--noob`, parameter estimation mode is selected.
- Otherwise, model choice mode is used by default.
- Linear additions are LDA for model choice and PLS for parameter estimation; the `--nolinear` option disables them in both cases.
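The mode-selection rule in the bullets above can be sketched in a few lines of Python. This only illustrates the rule as documented here, not abcranger's actual option handling; the function name `select_mode` and its keyword arguments are hypothetical.

```python
def select_mode(chosenscen=None, parameter=None, noob=None):
    """Mirror the documented rule: all three options given -> parameter estimation."""
    if None not in (chosenscen, parameter, noob):
        return "parameter estimation"
    # Any missing option falls back to the default mode.
    return "model choice"


print(select_mode())                                       # model choice
print(select_mode(chosenscen=1, parameter="ra", noob=50))  # parameter estimation
```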

# Model Choice

## Example

Example :

`abcranger -t 10000 -j 8`

Header, reftable and statobs files should be in the current directory.

## Groups

With the option `-g` (or `--groups`), you may split your models into several groups. For example, if you have six models labeled from 1 to 6, `-g "1,2,3;4,5,6"` puts models 1, 2, 3 in one group and models 4, 5, 6 in another.
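To make the group syntax concrete, here is a minimal Python sketch that splits such a string into lists of model labels. It assumes, from the example alone, that `;` separates groups and `,` separates models; the helper `parse_groups` is hypothetical and is not part of abcranger or pyabcranger.

```python
def parse_groups(spec):
    """Split a string such as "1,2,3;4,5,6" into [[1, 2, 3], [4, 5, 6]]."""
    groups = []
    for chunk in spec.split(";"):
        # Ignore empty tokens so stray separators do not create empty models.
        models = [int(tok) for tok in chunk.split(",") if tok.strip()]
        if models:
            groups.append(models)
    return groups


print(parse_groups("1,2,3;4,5,6"))  # [[1, 2, 3], [4, 5, 6]]
```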

## Generated files

Four files are created:

- `modelchoice_out.ooberror`: OOB error rate vs number of trees (line number is the number of trees)
- `modelchoice_out.importance`: variable importance (sorted)
- `modelchoice_out.predictions`: votes, prediction and posterior error rate
- `modelchoice_out.confusion`: OOB confusion matrix of the classifier

# Parameter Estimation

## Composite parameters

When specifying the parameter (option `--parameter`), one may specify simple composite parameters as the division, addition or multiplication of two existing parameters, like `t/N` or `T1+T2`.
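As an illustration of what a composite parameter means numerically, the following Python sketch evaluates such an expression from a dictionary of parameter values. The helper `composite_value`, the use of `*` for multiplication, and the sample values are all assumptions made for this example; abcranger's own parsing may differ.

```python
import operator
import re

# Supported binary operations between two existing parameters.
_OPS = {"/": operator.truediv, "+": operator.add, "*": operator.mul}


def composite_value(expr, params):
    """Evaluate "a/b", "a+b" or "a*b" (or a plain name) against params."""
    match = re.fullmatch(r"\s*(\w+)\s*([/+*])\s*(\w+)\s*", expr)
    if match is None:
        return params[expr.strip()]  # simple, non-composite parameter
    left, op, right = match.groups()
    return _OPS[op](params[left], params[right])


values = {"t": 50.0, "N": 200.0, "T1": 10.0, "T2": 5.0}  # made-up values
print(composite_value("t/N", values))    # 0.25
print(composite_value("T1+T2", values))  # 15.0
```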

## A note about PLS heuristic

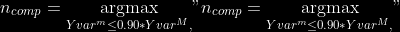

The `--plsmaxvar` option (defaulting at 0.90) fixes the number of

selected pls axes so that we get at least the specified percentage of

maximum explained variance of the output. The explained variance of the

output of the

first

axes is defined by the R-squared of the output:

^2}}{\sum_{i=1}^{N}{(y_{i}-\hat{y})^2}}")

where

is the output

scored by the pls for the

th

component. So, only the

first axis are kept, and :

Note that if you specify 0 as `--plsmaxvar`, an “elbow” heuristic is

activiated where the following condition is tested for every computed

axis :

\left(Yvar^{k+1}-Yvar^ {k}\right)")

If this condition is true for a windows of previous axes, sized to 10%

of the total possible axis, then we stop the PLS axis computation.

In practice, we find this

close enough to the previous

for 99%, but it isn’t guaranteed.
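The `--plsmaxvar` rule can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not abcranger's implementation: the explained variance is taken as the usual R-squared ratio (both sums centered on the mean of the observed output), the selected number of axes is the smallest one reaching the requested fraction of the maximum, and the names `explained_variance` and `select_n_components` are hypothetical.

```python
def explained_variance(y, y_hat):
    """R-squared style ratio: spread of fitted values over spread of observations."""
    y_bar = sum(y) / len(y)
    explained = sum((v - y_bar) ** 2 for v in y_hat)
    total = sum((v - y_bar) ** 2 for v in y)
    return explained / total


def select_n_components(yvar, plsmaxvar=0.9):
    """Smallest m such that yvar[m - 1] >= plsmaxvar * max(yvar)."""
    target = plsmaxvar * max(yvar)
    for m, value in enumerate(yvar, start=1):
        if value >= target:
            return m
    return len(yvar)


yvar = [0.50, 0.80, 0.92, 0.97, 1.00]  # made-up explained variances per axis count
print(select_n_components(yvar))        # 3
```

With the default of 0.9, the third axis is the first one whose explained variance (0.92) reaches 90% of the maximum, so three axes are kept.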

## The meaning of the `noob` parameter

The median global/local statistics and confidence intervals (global) measures for parameter estimation need a number of OOB samples (`--noob`) to be reliable (typically 30% of the size of the dataset is sufficient). Be aware that computing the whole set (i.e. assigning `--noob` the same value as `--nref`) for weights predictions ([Raynal et al. 2018](#ref-raynal2016abc)) could be very costly, memory and CPU-wise, if your dataset is large in number of samples, so it could be advisable to compute them only for a subset of size `noob`.

## Example (parameter estimation)

Example (working with the dataset in `test/data`) :

`abcranger -t 1000 -j 8 --parameter ra --chosenscen 1 --noob 50`

Header, reftable and statobs files should be in the current directory.
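When driving the standalone binary from a script, the same command line can be assembled as an argument list. The sketch below only uses flags listed in the usage text; the helper name `estimparam_argv` is hypothetical, and it assumes an `abcranger` executable is reachable on your `PATH`.

```python
import shlex


def estimparam_argv(parameter, chosenscen, noob, ntree=500, threads=0):
    """Build the argument list for a parameter estimation run."""
    return [
        "abcranger",
        "-t", str(ntree),
        "-j", str(threads),
        "--parameter", parameter,
        "--chosenscen", str(chosenscen),
        "--noob", str(noob),
    ]


argv = estimparam_argv("ra", chosenscen=1, noob=50, ntree=1000, threads=8)
print(shlex.join(argv))
```

The resulting list can be handed to `subprocess.run(argv, check=True)` from the directory that contains the header, reftable and statobs files.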

## Generated files (parameter estimation)

Five files (or seven if PLS is activated) are created:

- `estimparam_out.ooberror`: OOB MSE rate vs number of trees (line number is the number of trees)
- `estimparam_out.importance`: variable importance (sorted)
- `estimparam_out.predictions`: expectation, variance and 0.05, 0.5, 0.95 quantiles for the prediction
- `estimparam_out.predweights`: CSV of the value/weight pairs of the prediction (for density plot)
- `estimparam_out.oobstats`: various statistics on OOB (MSE, NMSE, NMAE, etc.)

If PLS is enabled:

- `estimparam_out.plsvar`: variance explained by number of components
- `estimparam_out.plsweights`: variable weights in the first component (sorted by absolute value)

# Various

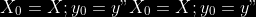

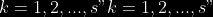

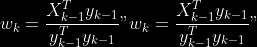

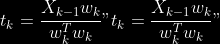

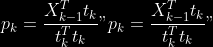

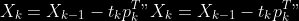

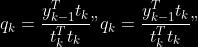

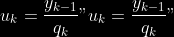

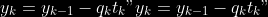

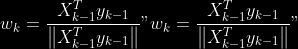

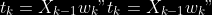

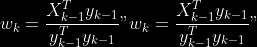

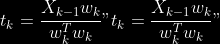

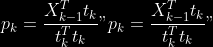

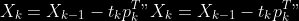

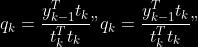

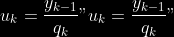

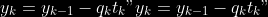

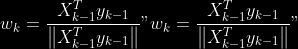

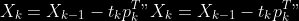

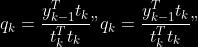

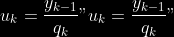

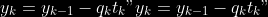

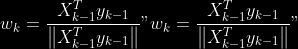

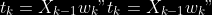

## Partial Least Squares algorithm

1. $X_{0}=X ; y_{0}=y$
2. For $k=1,2,\ldots,s$:
    1. $w_{k}=\frac{X_{k-1}^{T} y_{k-1}}{y_{k-1}^{T} y_{k-1}}$
    2. Normalize $w_{k}$ to $1$
    3. $t_{k}=\frac{X_{k-1} w_{k}}{w_{k}^{T} w_{k}}$
    4. $p_{k}=\frac{X_{k-1}^{T} t_{k}}{t_{k}^{T} t_{k}}$
    5. $X_{k}=X_{k-1}-t_{k} p_{k}^{T}$
    6. $q_{k}=\frac{y_{k-1}^{T} t_{k}}{t_{k}^{T} t_{k}}$
    7. $u_{k}=\frac{y_{k-1}}{q_{k}}$
    8. $y_{k}=y_{k-1}-q_{k} t_{k}$

**Comment** When there isn’t any missing data, stages $2.1$ and $2.2$ could be replaced by $w_{k}=\frac{X_{k-1}^{T} y_{k-1}}{\left\|X_{k-1}^{T} y_{k-1}\right\|}$ and $2.3$ by $t_{k}=X_{k-1}w_{k}$.

To get $W$ so that $T=XW$ we compute:

$$\mathbf{W}=\mathbf{W}^{*}\left(\widetilde{\mathbf{P}} \mathbf{W}^{*}\right)^{-1}$$

where $\widetilde{\mathbf{P}}_{K \times p}=\mathbf{t}\left[p_{1}, \ldots, p_{K}\right]$ and $\mathbf{W}^{*}_{p \times K} = [w_1, \ldots, w_K]$.
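For readers who prefer code, here is a minimal pure-Python sketch of a standard univariate PLS (PLS1) iteration in its no-missing-data form: weights proportional to $X^{T}y$ and normalized to unit length, scores $t = Xw$, then deflation of both $X$ and $y$. It assumes centered inputs and is an illustration only; abcranger's own implementation is written in C++ on top of Eigen and may differ in details. The function name `pls1` and the sample data are hypothetical.

```python
def pls1(X, y, n_components, tol=1e-12):
    """Univariate PLS with deflation.

    X: list of N rows of p floats (centered), y: list of N floats (centered).
    Returns (W, T, P, Q): weights, scores, loadings and y-coefficients,
    one entry per extracted component.
    """
    Xk = [row[:] for row in X]  # X_0 = X
    yk = list(y)                # y_0 = y
    n, p = len(Xk), len(Xk[0])
    W, T, P, Q = [], [], [], []
    for _ in range(n_components):
        # Weight vector proportional to X^T y, normalized to unit length.
        w = [sum(Xk[i][j] * yk[i] for i in range(n)) for j in range(p)]
        norm = sum(v * v for v in w) ** 0.5
        if norm < tol:
            break  # nothing left in y that X can explain
        w = [v / norm for v in w]
        # Scores t = X w.
        t = [sum(Xk[i][j] * w[j] for j in range(p)) for i in range(n)]
        tt = sum(v * v for v in t)
        # Loadings p = X^T t / (t^T t) and coefficient q = y^T t / (t^T t).
        load = [sum(Xk[i][j] * t[i] for i in range(n)) / tt for j in range(p)]
        q = sum(yk[i] * t[i] for i in range(n)) / tt
        # Deflate X and y by the part explained by this component.
        Xk = [[Xk[i][j] - t[i] * load[j] for j in range(p)] for i in range(n)]
        yk = [yk[i] - q * t[i] for i in range(n)]
        W.append(w)
        T.append(t)
        P.append(load)
        Q.append(q)
    return W, T, P, Q


# Made-up centered data where y = x1 + 2 * x2 exactly.
X = [[-2.0, -1.0], [0.0, -1.0], [0.0, 1.0], [2.0, 1.0]]
y = [-4.0, -2.0, 2.0, 4.0]
W, T, P, Q = pls1(X, y, n_components=2)
fitted = [sum(Q[k] * T[k][i] for k in range(len(Q))) for i in range(len(y))]
print([round(v, 6) for v in fitted])
```

Because the scores are mutually orthogonal, the fitted output is simply the sum of `Q[k] * T[k]` over the kept components, which is what makes the per-axis explained variance easy to accumulate.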

# TODO

## Input/Output

- [x] Integrate hdf5 (or exdir? msgpack?) routines to save/load reftables/observed stats with associated metadata
- [ ] Provide R code to save/load the data
- [x] Provide Python code to save/load the data

## C++ standalone

- [x] Merge the two methodologies in a single executable with (almost) the same options
- [ ] (Optional) Possibly move to another options parser (CLI?)

## External interfaces

- [ ] R package

- [x] Python package

## Documentation

- [ ] Code documentation

- [ ] Document the build

## Continuous integration

- [x] Linux CI build with intel/MKL optimizations

- [x] osX CI build

- [x] Windows CI build

## Long/Mid term TODO

- methodologies parameters auto-tuning
- auto-discovering the optimal number of trees by monitoring OOB error
- auto-limiting number of threads by available memory
- Streamline the two methodologies (model choice and then parameter estimation)
- Write our own tree/rf implementation with better storage efficiency than ranger
- Make functional tests for the two methodologies
- Possible to use Mondrian forests for online batches? See ([Lakshminarayanan, Roy, and Teh 2014](#ref-lakshminarayanan2014mondrian))

# References

This work was the subject of a proceedings paper at [JOBIM 2020](https://jobim2020.sciencesconf.org/), with a [PDF](https://hal.archives-ouvertes.fr/hal-02910067v2) and a [video](https://relaiswebcasting.mediasite.com/mediasite/Play/8ddb4e40fc88422481f1494cf6af2bb71d?catalog=e534823f0c954836bf85bfa80af2290921) (in French) ([Collin et al. 2020](#ref-collin:hal-02910067)).

<div id="refs" class="references csl-bib-body hanging-indent">

<div id="ref-Collin_2021" class="csl-entry">

Collin, François-David, Ghislain Durif, Louis Raynal, Eric Lombaert,

Mathieu Gautier, Renaud Vitalis, Jean-Michel Marin, and Arnaud Estoup.

2021. “Extending Approximate Bayesian Computation with Supervised

Machine Learning to Infer Demographic History from Genetic Polymorphisms

Using DIYABC Random Forest.” *Molecular Ecology Resources* 21 (8):

2598–2613. <https://doi.org/10.1111/1755-0998.13413>.

</div>

<div id="ref-collin:hal-02910067" class="csl-entry">

Collin, François-David, Arnaud Estoup, Jean-Michel Marin, and Louis

Raynal. 2020. “<span class="nocase">Bringing ABC inference to the

machine learning realm : AbcRanger, an optimized random forests library

for ABC</span>.” In *JOBIM 2020*, 2020:66. JOBIM. Montpellier, France.

<https://hal.archives-ouvertes.fr/hal-02910067>.

</div>

<div id="ref-friedman2001elements" class="csl-entry">

Friedman, Jerome, Trevor Hastie, and Robert Tibshirani. 2001. *The Elements of Statistical Learning*. Vol. 1. 10. Springer Series in Statistics. New York, NY, USA.

</div>

<div id="ref-eigenweb" class="csl-entry">

Guennebaud, Gaël, Benoît Jacob, et al. 2010. “Eigen V3.”

http://eigen.tuxfamily.org.

</div>

<div id="ref-lakshminarayanan2014mondrian" class="csl-entry">

Lakshminarayanan, Balaji, Daniel M Roy, and Yee Whye Teh. 2014.

“Mondrian Forests: Efficient Online Random Forests.” In *Advances in

Neural Information Processing Systems*, 3140–48.

</div>

<div id="ref-JMLR:v19:17-374" class="csl-entry">

Lintusaari, Jarno, Henri Vuollekoski, Antti Kangasrääsiö, Kusti Skytén,

Marko Järvenpää, Pekka Marttinen, Michael U. Gutmann, Aki Vehtari, Jukka

Corander, and Samuel Kaski. 2018. “ELFI: Engine for Likelihood-Free

Inference.” *Journal of Machine Learning Research* 19 (16): 1–7.

<http://jmlr.org/papers/v19/17-374.html>.

</div>

<div id="ref-pudlo2015reliable" class="csl-entry">

Pudlo, Pierre, Jean-Michel Marin, Arnaud Estoup, Jean-Marie Cornuet,

Mathieu Gautier, and Christian P Robert. 2015. “Reliable ABC Model

Choice via Random Forests.” *Bioinformatics* 32 (6): 859–66.

</div>

<div id="ref-raynal2016abc" class="csl-entry">

Raynal, Louis, Jean-Michel Marin, Pierre Pudlo, Mathieu Ribatet,

Christian P Robert, and Arnaud Estoup. 2018. “<span class="nocase">ABC

random forests for Bayesian parameter inference</span>.”

*Bioinformatics* 35 (10): 1720–28.

<https://doi.org/10.1093/bioinformatics/bty867>.

</div>

<div id="ref-wright2015ranger" class="csl-entry">

Wright, Marvin N, and Andreas Ziegler. 2015. “Ranger: A Fast Implementation of Random Forests for High Dimensional Data in C++ and R.” *arXiv Preprint arXiv:1508.04409*.

</div>

</div>

[^1]: The term “online” here and in the code does not have the usual meaning it has in “online machine learning”. We still need the entire training data set at once. Our implementation is “online” not in the sequential order of the input data, but in the sequential order of computation of the trees in the random forest, which are computed one after another and then discarded.

[^2]: We only use the C++ core of ranger, which is under the [MIT License](https://raw.githubusercontent.com/imbs-hl/ranger/master/cpp_version/COPYING), same as ours.

%package -n python3-pyabcranger

Summary: ABC random forests for model choice and parameter estimation, python wrapper

Provides: python-pyabcranger

BuildRequires: python3-devel

BuildRequires: python3-setuptools

BuildRequires: python3-pip

BuildRequires: python3-cffi

BuildRequires: gcc

BuildRequires: gdb

%description -n python3-pyabcranger

- <a href="#python" id="toc-python">Python</a>

- <a href="#usage" id="toc-usage">Usage</a>

- <a href="#model-choice" id="toc-model-choice">Model Choice</a>

- <a href="#parameter-estimation" id="toc-parameter-estimation">Parameter Estimation</a>

- <a href="#various" id="toc-various">Various</a>

- <a href="#todo" id="toc-todo">TODO</a>

- <a href="#references" id="toc-references">References</a>

<!-- pandoc -f markdown README-ORIG.md -t gfm -o README.md --citeproc -s --toc --toc-depth=1 --webtex -->

Random forest methodologies for:

- ABC model choice ([Pudlo et al. 2015](#ref-pudlo2015reliable))
- ABC Bayesian parameter inference ([Raynal et al. 2018](#ref-raynal2016abc))

Libraries we use:

- [Ranger](https://github.com/imbs-hl/ranger) ([Wright and Ziegler 2015](#ref-wright2015ranger)): we use our own fork and have tuned forests to do “online”[^1] computations (growing trees and making predictions in the same pass, which removes the need for in-memory storage of the whole forest)[^2].
- [Eigen3](http://eigen.tuxfamily.org) ([Guennebaud, Jacob, et al. 2010](#ref-eigenweb))

Note that we use our own implementation of LDA and PLS from ([Friedman, Hastie, and Tibshirani 2001, 1:81, 114](#ref-friedman2001elements)). PLS is optimized for the univariate case, see [5.1](#sec-plsalgo). To speed up linear algebra on large reftables, the Linux binaries (standalone and Python wheel) are statically linked with [Intel’s Math Kernel Library](https://www.intel.com/content/www/us/en/develop/documentation/oneapi-programming-guide/top/api-based-programming/intel-oneapi-math-kernel-library-onemkl.html), in order to leverage multicore and SIMD extensions on modern CPUs.

There is one set of binaries, which contains a macOS/Linux/Windows (x64 only) binary for each platform. They are available within the “[Releases](https://github.com/fradav/abcranger/releases)” tab, under the “Assets” section (unfold it to see the list).

This is a pure command line binary, and there are no prerequisites or library dependencies needed to run it. Just download it and launch it from your terminal software of choice. The usual caveats with command line executables apply here: if you’re not proficient with the command line interface of your platform, please learn some basics or ask someone who might help you in those matters.

The standalone is part of a specialized Population Genetics graphical interface, [DIYABC-RF](https://diyabc.github.io/), presented in MER (Molecular Ecology Resources, Special Issue) ([Collin et al. 2021](#ref-Collin_2021)).

# Python

## Installation

``` bash
pip install pyabcranger
```

## Notebook examples

- On a [toy example with MA(q)](https://github.com/diyabc/abcranger/blob/master/notebooks/Toy%20example%20MA(q).ipynb), using ELFI ([Lintusaari et al. 2018](#ref-JMLR:v19:17-374)) as graph-powered engine.
- [Population genetics demo](https://github.com/diyabc/abcranger/blob/master/notebooks/Population%20genetics%20Demo.ipynb), with data from ([Collin et al. 2021](#ref-Collin_2021)), available [there](https://github.com/diyabc/diyabc/tree/master/diyabc-tests/MER/modelchoice/IndSeq)

# Usage

``` text
 - ABC Random Forest - Model choice or parameter estimation command line options
Usage:
  -h, --header arg        Header file (default: headerRF.txt)
  -r, --reftable arg      Reftable file (default: reftableRF.bin)
  -b, --statobs arg       Statobs file (default: statobsRF.txt)
  -o, --output arg        Prefix output (modelchoice_out or estimparam_out by
                          default)
  -n, --nref arg          Number of samples, 0 means all (default: 0)
  -m, --minnodesize arg   Minimal node size. 0 means 1 for classification or
                          5 for regression (default: 0)
  -t, --ntree arg         Number of trees (default: 500)
  -j, --threads arg       Number of threads, 0 means all (default: 0)
  -s, --seed arg          Seed, generated by default (default: 0)
  -c, --noisecolumns arg  Number of noise columns (default: 5)
      --nolinear          Disable LDA for model choice or PLS for parameter
                          estimation
      --plsmaxvar arg     Percentage of maximum explained Y-variance for
                          retaining pls axis (default: 0.9)
      --chosenscen arg    Chosen scenario (mandatory for parameter
                          estimation)
      --noob arg          number of oob testing samples (mandatory for
                          parameter estimation)
      --parameter arg     name of the parameter of interest (mandatory for
                          parameter estimation)
  -g, --groups arg        Groups of models
      --help              Print help
```

- If you provide `--chosenscen`, `--parameter` and `--noob`, parameter estimation mode is selected.
- Otherwise, model choice mode is used by default.
- Linear additions are LDA for model choice and PLS for parameter estimation; the `--nolinear` option disables them in both cases.

# Model Choice

## Example

Example :

`abcranger -t 10000 -j 8`

Header, reftable and statobs files should be in the current directory.

## Groups

With the option `-g` (or `--groups`), you may split your models into several groups. For example, if you have six models labeled from 1 to 6, `-g "1,2,3;4,5,6"` puts models 1, 2, 3 in one group and models 4, 5, 6 in another.

## Generated files

Four files are created:

- `modelchoice_out.ooberror`: OOB error rate vs number of trees (line number is the number of trees)
- `modelchoice_out.importance`: variable importance (sorted)
- `modelchoice_out.predictions`: votes, prediction and posterior error rate
- `modelchoice_out.confusion`: OOB confusion matrix of the classifier

# Parameter Estimation

## Composite parameters

When specifying the parameter (option `--parameter`), one may specify simple composite parameters as the division, addition or multiplication of two existing parameters, like `t/N` or `T1+T2`.

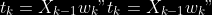

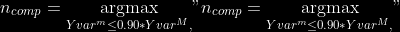

## A note about PLS heuristic

The `--plsmaxvar` option (defaulting at 0.90) fixes the number of

selected pls axes so that we get at least the specified percentage of

maximum explained variance of the output. The explained variance of the

output of the

first

axes is defined by the R-squared of the output:

^2}}{\sum_{i=1}^{N}{(y_{i}-\hat{y})^2}}")

where

is the output

scored by the pls for the

th

component. So, only the

first axis are kept, and :

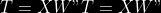

Note that if you specify 0 as `--plsmaxvar`, an “elbow” heuristic is

activiated where the following condition is tested for every computed

axis :

\left(Yvar^{k+1}-Yvar^ {k}\right)")

If this condition is true for a windows of previous axes, sized to 10%

of the total possible axis, then we stop the PLS axis computation.

In practice, we find this

close enough to the previous

for 99%, but it isn’t guaranteed.

## The meaning of the `noob` parameter

The median global/local statistics and confidence intervals (global) measures for parameter estimation need a number of OOB samples (`--noob`) to be reliable (typically 30% of the size of the dataset is sufficient). Be aware that computing the whole set (i.e. assigning `--noob` the same value as `--nref`) for weights predictions ([Raynal et al. 2018](#ref-raynal2016abc)) could be very costly, memory and CPU-wise, if your dataset is large in number of samples, so it could be advisable to compute them only for a subset of size `noob`.

## Example (parameter estimation)

Example (working with the dataset in `test/data`) :

`abcranger -t 1000 -j 8 --parameter ra --chosenscen 1 --noob 50`

Header, reftable and statobs files should be in the current directory.

## Generated files (parameter estimation)

Five files (or seven if PLS is activated) are created:

- `estimparam_out.ooberror`: OOB MSE rate vs number of trees (line number is the number of trees)
- `estimparam_out.importance`: variable importance (sorted)
- `estimparam_out.predictions`: expectation, variance and 0.05, 0.5, 0.95 quantiles for the prediction
- `estimparam_out.predweights`: CSV of the value/weight pairs of the prediction (for density plot)
- `estimparam_out.oobstats`: various statistics on OOB (MSE, NMSE, NMAE, etc.)

If PLS is enabled:

- `estimparam_out.plsvar`: variance explained by number of components
- `estimparam_out.plsweights`: variable weights in the first component (sorted by absolute value)

# Various

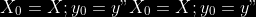

## Partial Least Squares algorithm

1. $X_{0}=X ; y_{0}=y$
2. For $k=1,2,\ldots,s$:
    1. $w_{k}=\frac{X_{k-1}^{T} y_{k-1}}{y_{k-1}^{T} y_{k-1}}$
    2. Normalize $w_{k}$ to $1$
    3. $t_{k}=\frac{X_{k-1} w_{k}}{w_{k}^{T} w_{k}}$
    4. $p_{k}=\frac{X_{k-1}^{T} t_{k}}{t_{k}^{T} t_{k}}$
    5. $X_{k}=X_{k-1}-t_{k} p_{k}^{T}$
    6. $q_{k}=\frac{y_{k-1}^{T} t_{k}}{t_{k}^{T} t_{k}}$
    7. $u_{k}=\frac{y_{k-1}}{q_{k}}$
    8. $y_{k}=y_{k-1}-q_{k} t_{k}$

**Comment** When there isn’t any missing data, stages $2.1$ and $2.2$ could be replaced by $w_{k}=\frac{X_{k-1}^{T} y_{k-1}}{\left\|X_{k-1}^{T} y_{k-1}\right\|}$ and $2.3$ by $t_{k}=X_{k-1}w_{k}$.

To get $W$ so that $T=XW$ we compute:

$$\mathbf{W}=\mathbf{W}^{*}\left(\widetilde{\mathbf{P}} \mathbf{W}^{*}\right)^{-1}$$

where $\widetilde{\mathbf{P}}_{K \times p}=\mathbf{t}\left[p_{1}, \ldots, p_{K}\right]$ and $\mathbf{W}^{*}_{p \times K} = [w_1, \ldots, w_K]$.

# TODO

## Input/Output

- [x] Integrate hdf5 (or exdir? msgpack?) routines to save/load reftables/observed stats with associated metadata
- [ ] Provide R code to save/load the data
- [x] Provide Python code to save/load the data

## C++ standalone

- [x] Merge the two methodologies in a single executable with (almost) the same options
- [ ] (Optional) Possibly move to another options parser (CLI?)

## External interfaces

- [ ] R package

- [x] Python package

## Documentation

- [ ] Code documentation

- [ ] Document the build

## Continuous integration

- [x] Linux CI build with intel/MKL optimizations

- [x] osX CI build

- [x] Windows CI build

## Long/Mid term TODO

- methodologies parameters auto-tuning
- auto-discovering the optimal number of trees by monitoring OOB error
- auto-limiting number of threads by available memory
- Streamline the two methodologies (model choice and then parameter estimation)
- Write our own tree/rf implementation with better storage efficiency than ranger
- Make functional tests for the two methodologies
- Possible to use Mondrian forests for online batches? See ([Lakshminarayanan, Roy, and Teh 2014](#ref-lakshminarayanan2014mondrian))

# References

This work was the subject of a proceedings paper at [JOBIM 2020](https://jobim2020.sciencesconf.org/), with a [PDF](https://hal.archives-ouvertes.fr/hal-02910067v2) and a [video](https://relaiswebcasting.mediasite.com/mediasite/Play/8ddb4e40fc88422481f1494cf6af2bb71d?catalog=e534823f0c954836bf85bfa80af2290921) (in French) ([Collin et al. 2020](#ref-collin:hal-02910067)).

<div id="refs" class="references csl-bib-body hanging-indent">

<div id="ref-Collin_2021" class="csl-entry">

Collin, François-David, Ghislain Durif, Louis Raynal, Eric Lombaert,

Mathieu Gautier, Renaud Vitalis, Jean-Michel Marin, and Arnaud Estoup.

2021. “Extending Approximate Bayesian Computation with Supervised

Machine Learning to Infer Demographic History from Genetic Polymorphisms

Using DIYABC Random Forest.” *Molecular Ecology Resources* 21 (8):

2598–2613. <https://doi.org/10.1111/1755-0998.13413>.

</div>

<div id="ref-collin:hal-02910067" class="csl-entry">

Collin, François-David, Arnaud Estoup, Jean-Michel Marin, and Louis

Raynal. 2020. “<span class="nocase">Bringing ABC inference to the

machine learning realm : AbcRanger, an optimized random forests library

for ABC</span>.” In *JOBIM 2020*, 2020:66. JOBIM. Montpellier, France.

<https://hal.archives-ouvertes.fr/hal-02910067>.

</div>

<div id="ref-friedman2001elements" class="csl-entry">

Friedman, Jerome, Trevor Hastie, and Robert Tibshirani. 2001. *The
Elements of Statistical Learning*. Vol. 1. Springer Series in
Statistics. New York, NY, USA: Springer.

</div>

<div id="ref-eigenweb" class="csl-entry">

Guennebaud, Gaël, Benoît Jacob, et al. 2010. “Eigen V3.”

http://eigen.tuxfamily.org.

</div>

<div id="ref-lakshminarayanan2014mondrian" class="csl-entry">

Lakshminarayanan, Balaji, Daniel M Roy, and Yee Whye Teh. 2014.

“Mondrian Forests: Efficient Online Random Forests.” In *Advances in

Neural Information Processing Systems*, 3140–48.

</div>

<div id="ref-JMLR:v19:17-374" class="csl-entry">

Lintusaari, Jarno, Henri Vuollekoski, Antti Kangasrääsiö, Kusti Skytén,

Marko Järvenpää, Pekka Marttinen, Michael U. Gutmann, Aki Vehtari, Jukka

Corander, and Samuel Kaski. 2018. “ELFI: Engine for Likelihood-Free

Inference.” *Journal of Machine Learning Research* 19 (16): 1–7.

<http://jmlr.org/papers/v19/17-374.html>.

</div>

<div id="ref-pudlo2015reliable" class="csl-entry">

Pudlo, Pierre, Jean-Michel Marin, Arnaud Estoup, Jean-Marie Cornuet,

Mathieu Gautier, and Christian P Robert. 2015. “Reliable ABC Model

Choice via Random Forests.” *Bioinformatics* 32 (6): 859–66.

</div>

<div id="ref-raynal2016abc" class="csl-entry">

Raynal, Louis, Jean-Michel Marin, Pierre Pudlo, Mathieu Ribatet,

Christian P Robert, and Arnaud Estoup. 2018. “<span class="nocase">ABC

random forests for Bayesian parameter inference</span>.”

*Bioinformatics* 35 (10): 1720–28.

<https://doi.org/10.1093/bioinformatics/bty867>.

</div>

<div id="ref-wright2015ranger" class="csl-entry">

Wright, Marvin N, and Andreas Ziegler. 2015. “Ranger: A Fast
Implementation of Random Forests for High Dimensional Data in C++ and
R.” *arXiv Preprint arXiv:1508.04409*.

</div>

</div>

[^1]: The term “online” here and in the code does not have its usual
    meaning, as coined in “online machine learning”. We still need the
    entire training data set at once. Our implementation is “online”
    not in the sequential order of the input data, but in the
    sequential order of computation of the trees in the random forest,
    which are computed one after another and then discarded.

[^2]: We only use the C++ Core of ranger, which is under [MIT

License](https://raw.githubusercontent.com/imbs-hl/ranger/master/cpp_version/COPYING),

same as ours.

%package help

Summary: Development documents and examples for pyabcranger

Provides: python3-pyabcranger-doc

%description help

- <a href="#python" id="toc-python">Python</a>

- <a href="#usage" id="toc-usage">Usage</a>

- <a href="#model-choice" id="toc-model-choice">Model Choice</a>

- <a href="#parameter-estimation" id="toc-parameter-estimation">Parameter Estimation</a>

- <a href="#various" id="toc-various">Various</a>

- <a href="#todo" id="toc-todo">TODO</a>

- <a href="#references" id="toc-references">References</a>

<!-- pandoc -f markdown README-ORIG.md -t gfm -o README.md --citeproc -s --toc --toc-depth=1 --webtex -->

[PyPI package](https://pypi.python.org/pypi/pyabcranger)

[Build status](https://github.com/diyabc/abcranger/actions?query=workflow%3Aabcranger-build+branch%3Amaster)

Random forests methodologies for :

- ABC model choice ([Pudlo et al. 2015](#ref-pudlo2015reliable))
- ABC Bayesian parameter inference ([Raynal et al.
  2018](#ref-raynal2016abc))

Libraries we use :

- [Ranger](https://github.com/imbs-hl/ranger) ([Wright and Ziegler
  2015](#ref-wright2015ranger)) : we use our own fork and have tuned
  forests to do “online”[^1] computations (growing trees and making
  predictions in the same pass, which removes the need for in-memory
  storage of the whole forest)[^2].
- [Eigen3](http://eigen.tuxfamily.org) ([Guennebaud, Jacob, et al.
  2010](#ref-eigenweb))

Note that we use our own implementations of LDA and PLS, following
([Friedman, Hastie, and Tibshirani 2001, 1:81,
114](#ref-friedman2001elements)); PLS is optimized for the univariate
case, see [5.1](#sec-plsalgo). To speed up linear algebra on large
reftables, the Linux binaries (standalone and Python wheel) are
statically linked with [Intel’s Math Kernel
Library](https://www.intel.com/content/www/us/en/develop/documentation/oneapi-programming-guide/top/api-based-programming/intel-oneapi-math-kernel-library-onemkl.html),
in order to leverage multicore and SIMD extensions on modern CPUs.

There is one set of binaries, with one binary for each of macOS, Linux
and Windows (x64 only). They are available in the
“[Releases](https://github.com/fradav/abcranger/releases)” tab, under
the “Assets” section (unfold it to see the list).

These are pure command line binaries, and there are no prerequisites or
library dependencies needed to run them. Just download them and launch
them from your terminal of choice. The usual caveats with command line
executables apply: if you’re not proficient with the command line
interface of your platform, please learn some basics or ask someone who
can help you.

The standalone is part of a specialized population genetics graphical
interface, [DIYABC-RF](https://diyabc.github.io/), presented in a
special issue of MER (Molecular Ecology Resources) ([Collin et al.
2021](#ref-Collin_2021)).

# Python

## Installation

``` bash

pip install pyabcranger

```

## Notebooks examples

- On a [toy example with
  MA(q)](https://github.com/diyabc/abcranger/blob/master/notebooks/Toy%20example%20MA(q).ipynb),
  using ELFI ([Lintusaari et al. 2018](#ref-JMLR:v19:17-374)) as
  graph-powered engine.
- [Population genetics
  demo](https://github.com/diyabc/abcranger/blob/master/notebooks/Population%20genetics%20Demo.ipynb),
  data from ([Collin et al. 2021](#ref-Collin_2021)), available
  [there](https://github.com/diyabc/diyabc/tree/master/diyabc-tests/MER/modelchoice/IndSeq)

# Usage

``` text
 - ABC Random Forest - Model choice or parameter estimation command line options
Usage:
  -h, --header arg        Header file (default: headerRF.txt)
  -r, --reftable arg      Reftable file (default: reftableRF.bin)
  -b, --statobs arg       Statobs file (default: statobsRF.txt)
  -o, --output arg        Prefix output (modelchoice_out or estimparam_out by
                          default)
  -n, --nref arg          Number of samples, 0 means all (default: 0)
  -m, --minnodesize arg   Minimal node size. 0 means 1 for classification or
                          5 for regression (default: 0)
  -t, --ntree arg         Number of trees (default: 500)
  -j, --threads arg       Number of threads, 0 means all (default: 0)
  -s, --seed arg          Seed, generated by default (default: 0)
  -c, --noisecolumns arg  Number of noise columns (default: 5)
      --nolinear          Disable LDA for model choice or PLS for parameter
                          estimation
      --plsmaxvar arg     Percentage of maximum explained Y-variance for
                          retaining pls axis (default: 0.9)
      --chosenscen arg    Chosen scenario (mandatory for parameter
                          estimation)
      --noob arg          number of oob testing samples (mandatory for
                          parameter estimation)
      --parameter arg     name of the parameter of interest (mandatory for
                          parameter estimation)
  -g, --groups arg        Groups of models
      --help              Print help
```

- If you provide `--chosenscen`, `--parameter` and `--noob`, parameter
  estimation mode is selected.
- Otherwise, model choice mode is used by default.
- Linear additions are LDA for model choice and PLS for parameter
  estimation; the `--nolinear` option disables them in both cases.
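The mode selection rule above can be illustrated with a small Python sketch that assembles an `abcranger` argument list from the documented options. The helper names `abcranger_args` and `mode` are hypothetical and not part of abcranger or pyabcranger. Rejecting a partial set of estimation options is a choice made in this sketch, not documented behavior of the binary.

```python
from typing import List, Optional


def abcranger_args(ntree: int = 500, threads: int = 0,
                   chosenscen: Optional[int] = None,
                   parameter: Optional[str] = None,
                   noob: Optional[int] = None,
                   nolinear: bool = False) -> List[str]:
    """Build an argument list for the abcranger binary (hypothetical helper)."""
    args = ["abcranger", "-t", str(ntree), "-j", str(threads)]
    estimation = (chosenscen, parameter, noob)
    if any(value is not None for value in estimation):
        # Assumption of this sketch: refuse a partial set of estimation options.
        if any(value is None for value in estimation):
            raise ValueError("--chosenscen, --parameter and --noob go together")
        args += ["--parameter", parameter,
                 "--chosenscen", str(chosenscen),
                 "--noob", str(noob)]
    if nolinear:
        args.append("--nolinear")
    return args


def mode(args: List[str]) -> str:
    """Return the mode implied by the options, following the rule above."""
    needed = {"--chosenscen", "--parameter", "--noob"}
    return "parameter estimation" if needed <= set(args) else "model choice"


print(mode(abcranger_args(ntree=10000, threads=8)))
print(mode(abcranger_args(ntree=1000, threads=8,
                          parameter="ra", chosenscen=1, noob=50)))
```

The resulting list can be passed to `subprocess.run(args, check=True)` from a directory containing the header, reftable and statobs files.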

# Model Choice

## Example

Example :

`abcranger -t 10000 -j 8`

Header, reftable and statobs files should be in the current directory.

## Groups

With the option `-g` (or `--groups`), you may “group” your models into
several groups, separated by semicolons. For example, if you have six
models labeled from 1 to 6, you can split them into two groups with
`-g "1,2,3;4,5,6"`.
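To make the group syntax concrete, here is a minimal Python sketch that parses such a value. The functions `parse_groups` and `model_to_group` are illustrative names only. How abcranger labels the groups internally is not stated here, so the 1-based group index is an assumption.

```python
from typing import Dict, List


def parse_groups(spec: str) -> List[List[int]]:
    """Split a -g/--groups value such as "1,2,3;4,5,6" into lists of model labels."""
    return [[int(label) for label in group.split(",")]
            for group in spec.split(";")]


def model_to_group(groups: List[List[int]]) -> Dict[int, int]:
    """Map each model label to the 1-based index of its group (assumed labeling)."""
    return {model: index
            for index, group in enumerate(groups, start=1)
            for model in group}


groups = parse_groups("1,2,3;4,5,6")
print(groups)                      # [[1, 2, 3], [4, 5, 6]]
print(model_to_group(groups)[5])   # 2
```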

## Generated files

Four files are created :

- `modelchoice_out.ooberror` : OOB error rate vs number of trees (line
  number is the number of trees)
- `modelchoice_out.importance` : variable importance (sorted)
- `modelchoice_out.predictions` : votes, prediction and posterior error
  rate
- `modelchoice_out.confusion` : OOB confusion matrix of the classifier

# Parameter Estimation

## Composite parameters

When specifying the parameter (option `--parameter`), one may specify
simple composite parameters as the division, addition or multiplication
of two existing parameters, like `t/N` or `T1+T2`.
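As an illustration of what a composite parameter means for a single simulated sample, the following Python sketch evaluates such an expression on a dictionary of parameter values. The helper `composite_value` and the sample values are hypothetical. This does not reproduce how abcranger parses the option internally.

```python
import operator
import re
from typing import Dict

# The three operations named above: division, addition, multiplication.
OPS = {"/": operator.truediv, "+": operator.add, "*": operator.mul}


def composite_value(expr: str, params: Dict[str, float]) -> float:
    """Evaluate a plain parameter name or a two-parameter composite."""
    match = re.fullmatch(r"\s*(\w+)\s*([/+*])\s*(\w+)\s*", expr)
    if match is None:
        # Not a composite: treat the expression as a plain parameter name.
        return params[expr.strip()]
    left, op, right = match.groups()
    return OPS[op](params[left], params[right])


sample = {"t": 500.0, "N": 1000.0, "T1": 10.0, "T2": 32.0}  # made-up values
print(composite_value("t/N", sample))    # 0.5
print(composite_value("T1+T2", sample))  # 42.0
print(composite_value("N", sample))      # 1000.0
```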

## A note about PLS heuristic

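The `--plsmaxvar` option (defaulting to 0.90) fixes the number of
selected PLS axes so that we get at least the specified percentage of
the maximum explained variance of the output. The explained variance of
the output for the first $m$ axes is defined by the R-squared of the
output:

$$Yvar^m = \frac{\sum_{i=1}^{N}{(\hat{y}^{m}_{i}-\bar{y})^2}}{\sum_{i=1}^{N}{(y_{i}-\bar{y})^2}}$$

where $\hat{y}^{m}$ is the output $Y$ scored by the PLS for the $m$th
component and $\bar{y}$ is the mean of the output. So only the first
$n_{comp}$ axes are kept, with:

$$n_{comp} = \min \left\{ m : Yvar^m \geq 0.90 \times Yvar^M \right\}$$

where $M$ is the total number of computed axes and 0.90 stands for the
`--plsmaxvar` value.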

Note that if you specify 0 as `--plsmaxvar`, an “elbow” heuristic is
activated, where the following condition is tested for every computed
axis:

$$\frac{Yvar^{k+1}+Yvar^{k}}{2} \geq 0.99(N-k)\left(Yvar^{k+1}-Yvar^{k}\right)$$

If this condition holds for a window of previous axes, sized to 10% of
the total number of possible axes, then we stop the PLS axis
computation.

In practice, we find the resulting $n_{heur}$ close enough to the
previous $n_{comp}$ for 99%, but it isn’t guaranteed.
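The threshold rule behind `--plsmaxvar` can be sketched in Python as follows. This is one reading of “at least the specified percentage of maximum explained variance”, not the library's C++ code. The function name `select_n_comp` and the explained-variance values are made up for the example, and the values are assumed to be non-decreasing with the number of axes.

```python
from typing import Sequence


def select_n_comp(yvar: Sequence[float], plsmaxvar: float = 0.9) -> int:
    """Smallest number of axes whose explained variance reaches
    plsmaxvar times the maximum explained variance yvar[-1]."""
    target = plsmaxvar * yvar[-1]
    for m, value in enumerate(yvar, start=1):
        if value >= target:
            return m
    return len(yvar)


# yvar[m - 1] is the explained variance of the output with the first m axes.
yvar = [0.50, 0.80, 0.88, 0.95, 1.00]
print(select_n_comp(yvar))       # 4
print(select_n_comp(yvar, 0.5))  # 1
```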

## The meaning of the `noob` parameter

The median global/local statistics and the (global) confidence
intervals computed for parameter estimation need a sufficient number of
OOB samples (`--noob`) to be reliable (typically 30% of the size of the
dataset is sufficient). Be aware that computing the weight predictions
([Raynal et al. 2018](#ref-raynal2016abc)) on the whole set (i.e. giving
`--noob` the same value as `--nref`) can be very costly, memory- and
CPU-wise, if your dataset has a large number of samples, so it is
advisable to compute them on a subset of size `noob` only.

## Example (parameter estimation)

Example (working with the dataset in `test/data`) :

`abcranger -t 1000 -j 8 --parameter ra --chosenscen 1 --noob 50`

Header, reftable and statobs files should be in the current directory.

## Generated files (parameter estimation)

Five files (or seven if PLS is enabled) are created :

- `estimparam_out.ooberror` : OOB MSE rate vs number of trees (line
  number is the number of trees)
- `estimparam_out.importance` : variable importance (sorted)
- `estimparam_out.predictions` : expectation, variance and 0.05, 0.5,
  0.95 quantiles of the prediction
- `estimparam_out.predweights` : CSV of the value/weight pairs of the
  prediction (for density plots)
- `estimparam_out.oobstats` : various statistics on OOB samples (MSE,
  NMSE, NMAE etc.)

If PLS is enabled :

- `estimparam_out.plsvar` : variance explained by number of components
- `estimparam_out.plsweights` : variable weights in the first component
  (sorted by absolute value)

# Various

## Partial Least Squares algorithm

1.  $X_{0}=X ; y_{0}=y$
2.  For $k=1,2,...,s$ :
    1.  $w_{k}=\frac{X_{k-1}^{T} y_{k-1}}{y_{k-1}^{T} y_{k-1}}$
    2.  Normalize $w_k$ to $1$
    3.  $t_{k}=\frac{X_{k-1} w_{k}}{w_{k}^{T} w_{k}}$
    4.  $p_{k}=\frac{X_{k-1}^{T} t_{k}}{t_{k}^{T} t_{k}}$
    5.  $X_{k}=X_{k-1}-t_{k} p_{k}^{T}$
    6.  $q_{k}=\frac{y_{k-1}^{T} t_{k}}{t_{k}^{T} t_{k}}$
    7.  $u_{k}=\frac{y_{k-1}}{q_{k}}$
    8.  $y_{k}=y_{k-1}-q_{k} t_{k}$

**Comment** When there isn’t any missing data, stages $2.1$ and $2.2$
could be replaced by
$w_{k}=\frac{X_{k-1}^{T} y_{k-1}}{\left\|X_{k-1}^{T} y_{k-1}\right\|}$
and $2.3$ by $t_{k}=X_{k-1}w_{k}$.

To get $W$ so that $T=XW$ we compute :

$$\mathbf{W}=\mathbf{W}^{*}\left(\widetilde{\mathbf{P}} \mathbf{W}^{*}\right)^{-1}$$

where
$\widetilde{\mathbf{P}}_{K \times p}=\mathbf{t}\left[p_{1}, \ldots, p_{K}\right]$
and $\mathbf{W}^{*}_{p \times K} = [w_1, \ldots, w_K]$.
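For readers who prefer code, below is a minimal pure-Python sketch of univariate PLS by successive deflation, in the simplified form that applies when there is no missing data. It is a textbook-style illustration, not the optimized C++ implementation shipped with abcranger. The names `pls1`, `dot` and `xt_times` are illustrative, and the columns of `X` and the vector `y` are assumed to be centered beforehand.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def xt_times(X: Matrix, v: Vector) -> Vector:
    """Return X^T v for a row-major matrix X (n rows, p columns)."""
    return [dot([row[j] for row in X], v) for j in range(len(X[0]))]


def pls1(X: Matrix, y: Vector, n_comp: int) -> Tuple[List[Vector], List[Vector], Vector]:
    """Univariate PLS by deflation; X columns and y are assumed centered."""
    X = [row[:] for row in X]  # work on copies, inputs are left untouched
    y = y[:]
    weights, loadings, coefs = [], [], []
    for _ in range(n_comp):
        w = xt_times(X, y)                     # direction X^T y
        norm = math.sqrt(dot(w, w))
        if norm == 0.0:                        # nothing left to explain
            break
        w = [v / norm for v in w]              # normalize the weights to 1
        t = [dot(row, w) for row in X]         # scores t = X w
        tt = dot(t, t)
        p = [v / tt for v in xt_times(X, t)]   # loadings of X on t
        q = dot(y, t) / tt                     # regression of y on t
        # Deflate X and y by removing the part explained by this axis.
        X = [[x - ti * pj for x, pj in zip(row, p)] for row, ti in zip(X, t)]
        y = [yi - q * ti for yi, ti in zip(y, t)]
        weights.append(w)
        loadings.append(p)
        coefs.append(q)
    return weights, loadings, coefs


# Toy centered data where y = 2 * x1 + 1 * x2 exactly.
X = [[-1.0, -1.0], [-1.0, 1.0], [1.0, -1.0], [1.0, 1.0]]
y = [-3.0, -1.0, 1.0, 3.0]
weights, loadings, coefs = pls1(X, y, n_comp=1)
print(weights[0], coefs[0])
```

On this toy data a single axis already reproduces `y`, so the first weight vector is proportional to `(2, 1)`.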

# TODO

## Input/Output

- [x] Integrate hdf5 (or exdir? msgpack?) routines to save/load
  reftables/observed stats with associated metadata
- [ ] Provide R code to save/load the data
- [x] Provide Python code to save/load the data

## C++ standalone

- [x] Merge the two methodologies in a single executable with (almost)
  the same options
- [ ] (Optional) Possibly move to another options parser (CLI?)

## External interfaces

- [ ] R package

- [x] Python package

## Documentation

- [ ] Code documentation

- [ ] Document the build

## Continuous integration

- [x] Linux CI build with intel/MKL optimizations

- [x] macOS CI build

- [x] Windows CI build

## Long/Mid term TODO

- methodologies parameters auto-tuning
- auto-discovering the optimal number of trees by monitoring OOB error
- auto-limiting number of threads by available memory
- Streamline the two methodologies (model choice and then parameter
  estimation)
- Write our own tree/rf implementation with better storage efficiency
  than ranger
- Make functional tests for the two methodologies
- Possible to use Mondrian forests for online batches? See
  ([Lakshminarayanan, Roy, and Teh 2014](#ref-lakshminarayanan2014mondrian))

# References

This work was the subject of a proceedings paper at [JOBIM
2020](https://jobim2020.sciencesconf.org/), with a
[PDF](https://hal.archives-ouvertes.fr/hal-02910067v2) and a
[video](https://relaiswebcasting.mediasite.com/mediasite/Play/8ddb4e40fc88422481f1494cf6af2bb71d?catalog=e534823f0c954836bf85bfa80af2290921)
(in French) available ([Collin et al. 2020](#ref-collin:hal-02910067)).

<div id="refs" class="references csl-bib-body hanging-indent">

<div id="ref-Collin_2021" class="csl-entry">

Collin, François-David, Ghislain Durif, Louis Raynal, Eric Lombaert,

Mathieu Gautier, Renaud Vitalis, Jean-Michel Marin, and Arnaud Estoup.

2021. “Extending Approximate Bayesian Computation with Supervised

Machine Learning to Infer Demographic History from Genetic Polymorphisms

Using DIYABC Random Forest.” *Molecular Ecology Resources* 21 (8):

2598–2613. <https://doi.org/10.1111/1755-0998.13413>.

</div>

<div id="ref-collin:hal-02910067" class="csl-entry">

Collin, François-David, Arnaud Estoup, Jean-Michel Marin, and Louis

Raynal. 2020. “<span class="nocase">Bringing ABC inference to the

machine learning realm : AbcRanger, an optimized random forests library

for ABC</span>.” In *JOBIM 2020*, 2020:66. JOBIM. Montpellier, France.

<https://hal.archives-ouvertes.fr/hal-02910067>.

</div>

<div id="ref-friedman2001elements" class="csl-entry">

Friedman, Jerome, Trevor Hastie, and Robert Tibshirani. 2001. *The
Elements of Statistical Learning*. Vol. 1. Springer Series in
Statistics. New York, NY, USA: Springer.

</div>

<div id="ref-eigenweb" class="csl-entry">

Guennebaud, Gaël, Benoît Jacob, et al. 2010. “Eigen V3.”

http://eigen.tuxfamily.org.

</div>

<div id="ref-lakshminarayanan2014mondrian" class="csl-entry">

Lakshminarayanan, Balaji, Daniel M Roy, and Yee Whye Teh. 2014.

“Mondrian Forests: Efficient Online Random Forests.” In *Advances in

Neural Information Processing Systems*, 3140–48.

</div>

<div id="ref-JMLR:v19:17-374" class="csl-entry">

Lintusaari, Jarno, Henri Vuollekoski, Antti Kangasrääsiö, Kusti Skytén,

Marko Järvenpää, Pekka Marttinen, Michael U. Gutmann, Aki Vehtari, Jukka

Corander, and Samuel Kaski. 2018. “ELFI: Engine for Likelihood-Free

Inference.” *Journal of Machine Learning Research* 19 (16): 1–7.

<http://jmlr.org/papers/v19/17-374.html>.

</div>

<div id="ref-pudlo2015reliable" class="csl-entry">

Pudlo, Pierre, Jean-Michel Marin, Arnaud Estoup, Jean-Marie Cornuet,

Mathieu Gautier, and Christian P Robert. 2015. “Reliable ABC Model

Choice via Random Forests.” *Bioinformatics* 32 (6): 859–66.

</div>

<div id="ref-raynal2016abc" class="csl-entry">

Raynal, Louis, Jean-Michel Marin, Pierre Pudlo, Mathieu Ribatet,

Christian P Robert, and Arnaud Estoup. 2018. “<span class="nocase">ABC

random forests for Bayesian parameter inference</span>.”

*Bioinformatics* 35 (10): 1720–28.

<https://doi.org/10.1093/bioinformatics/bty867>.

</div>

<div id="ref-wright2015ranger" class="csl-entry">

Wright, Marvin N, and Andreas Ziegler. 2015. “Ranger: A Fast
Implementation of Random Forests for High Dimensional Data in C++ and
R.” *arXiv Preprint arXiv:1508.04409*.

</div>

</div>

[^1]: The term “online” here and in the code does not have its usual
    meaning, as coined in “online machine learning”. We still need the
    entire training data set at once. Our implementation is “online”
    not in the sequential order of the input data, but in the
    sequential order of computation of the trees in the random forest,
    which are computed one after another and then discarded.

[^2]: We only use the C++ Core of ranger, which is under [MIT

License](https://raw.githubusercontent.com/imbs-hl/ranger/master/cpp_version/COPYING),

same as ours.

%prep

%autosetup -n pyabcranger-0.0.69

%build

%py3_build

%install

%py3_install

install -d -m755 %{buildroot}/%{_pkgdocdir}

if [ -d doc ]; then cp -arf doc %{buildroot}/%{_pkgdocdir}; fi

if [ -d docs ]; then cp -arf docs %{buildroot}/%{_pkgdocdir}; fi

if [ -d example ]; then cp -arf example %{buildroot}/%{_pkgdocdir}; fi

if [ -d examples ]; then cp -arf examples %{buildroot}/%{_pkgdocdir}; fi

pushd %{buildroot}

if [ -d usr/lib ]; then

find usr/lib -type f -printf "\"/%h/%f\"\n" >> filelist.lst

fi

if [ -d usr/lib64 ]; then

find usr/lib64 -type f -printf "\"/%h/%f\"\n" >> filelist.lst

fi

if [ -d usr/bin ]; then

find usr/bin -type f -printf "\"/%h/%f\"\n" >> filelist.lst

fi

if [ -d usr/sbin ]; then

find usr/sbin -type f -printf "\"/%h/%f\"\n" >> filelist.lst

fi

touch doclist.lst

if [ -d usr/share/man ]; then

find usr/share/man -type f -printf "\"/%h/%f.gz\"\n" >> doclist.lst

fi

popd

mv %{buildroot}/filelist.lst .

mv %{buildroot}/doclist.lst .

%files -n python3-pyabcranger -f filelist.lst

%dir %{python3_sitearch}/*

%files help -f doclist.lst

%{_docdir}/*

%changelog

* Fri Jun 09 2023 Python_Bot <Python_Bot@openeuler.org> - 0.0.69-1

- Package Spec generated
