| author | CoprDistGit <infra@openeuler.org> | 2023-04-11 23:44:27 +0000 |
|---|---|---|
| committer | CoprDistGit <infra@openeuler.org> | 2023-04-11 23:44:27 +0000 |
| commit | 7c4196470148001633fd492e55ee8b150e831fca (patch) | |
| tree | 8d3128ab668d6249f7b40093a87798b9d15317be | |
| parent | c635f0344012a8999e6c6d5a3baf57de0b59a116 (diff) | |

automatic import of python-pypeln

| mode | file | lines changed |
|---|---|---|
| -rw-r--r-- | .gitignore | 1 |
| -rw-r--r-- | python-pypeln.spec | 501 |
| -rw-r--r-- | sources | 1 |

3 files changed, 503 insertions, 0 deletions

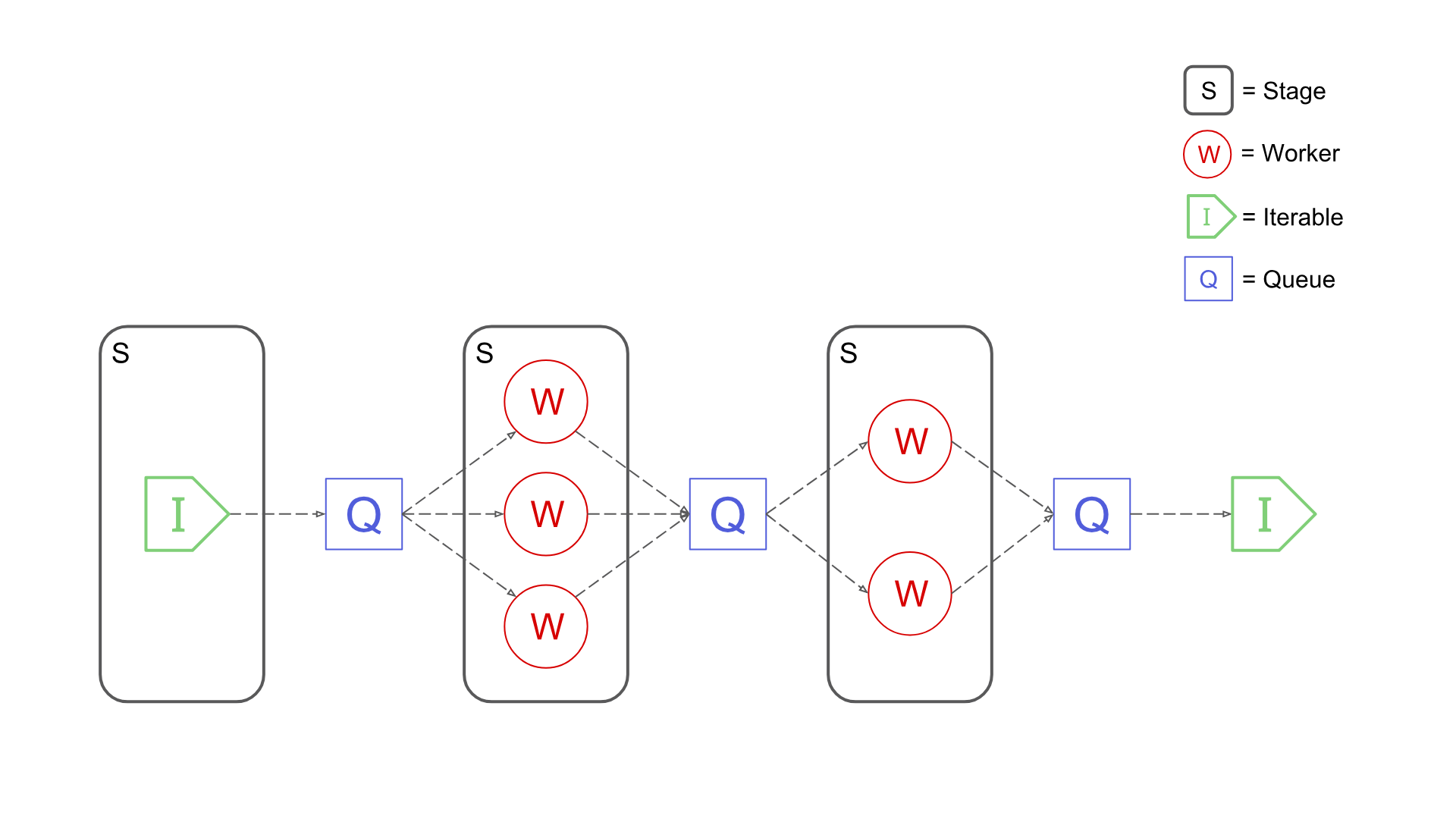

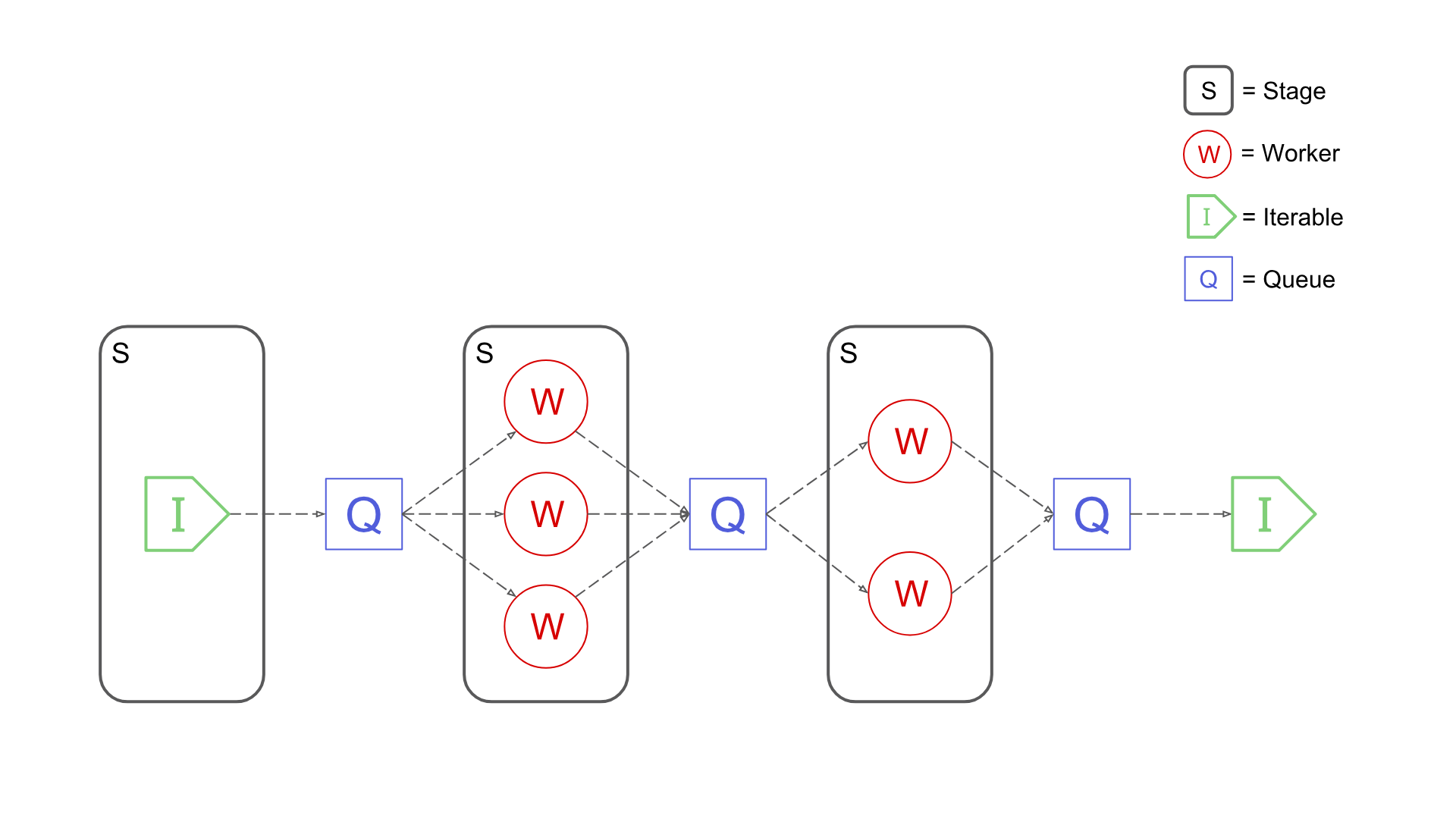

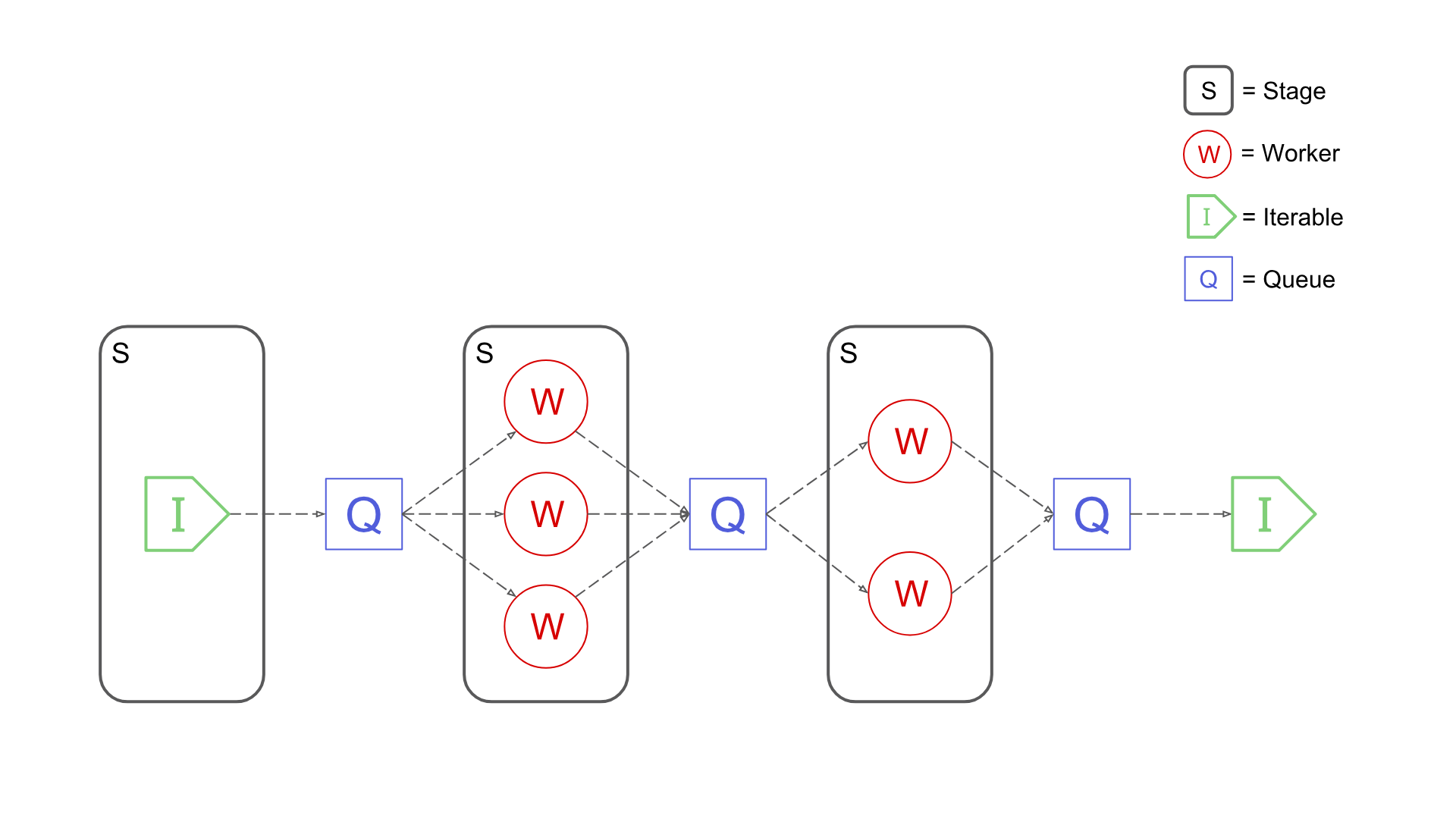

@@ -0,0 +1 @@
+/pypeln-0.4.9.tar.gz
diff --git a/python-pypeln.spec b/python-pypeln.spec
new file mode 100644
index 0000000..19d564f
--- /dev/null
+++ b/python-pypeln.spec
@@ -0,0 +1,501 @@
+%global _empty_manifest_terminate_build 0
+Name: python-pypeln
+Version: 0.4.9
+Release: 1
+Summary: please add a summary manually as the author left a blank one
+License: MIT
+URL: https://cgarciae.github.io/pypeln
+Source0: https://mirrors.nju.edu.cn/pypi/web/packages/da/87/7e4929696a4cf29fede0756d38c5cc08395d91bd7feac8d6072edf0a1ecf/pypeln-0.4.9.tar.gz
+BuildArch: noarch
+
+Requires: python3-stopit
+Requires: python3-typing_extensions
+Requires: python3-dataclasses
+
+%description
+_Pypeln (pronounced as "pypeline") is a simple yet powerful Python library for creating concurrent data pipelines._
+#### Main Features
+* **Simple**: Pypeln was designed to solve _medium_ data tasks that require parallelism and concurrency where using frameworks like Spark or Dask feels exaggerated or unnatural.
+* **Easy-to-use**: Pypeln exposes a familiar functional API compatible with regular Python code.
+* **Flexible**: Pypeln enables you to build pipelines using Processes, Threads and asyncio.Tasks via the exact same API.
+* **Fine-grained Control**: Pypeln allows you to have control over the memory and cpu resources used at each stage of your pipelines.
+For more information take a look at the [Documentation](https://cgarciae.github.io/pypeln).
+
+## Installation
+Install Pypeln using pip:
+```bash
+pip install pypeln
+```
+## Basic Usage
+With Pypeln you can easily create multi-stage data pipelines using 3 type of workers:
+### Processes
+You can create a pipeline based on [multiprocessing.Process](https://docs.python.org/3.4/library/multiprocessing.html#multiprocessing.Process) workers by using the `process` module:
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    time.sleep(random()) # <= some slow computation
+    return x + 1
+def slow_gt3(x):
+    time.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.process.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.process.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+At each stage the you can specify the numbers of `workers`. The `maxsize` parameter limits the maximum amount of elements that the stage can hold simultaneously.
+### Threads
+You can create a pipeline based on [threading.Thread](https://docs.python.org/3/library/threading.html#threading.Thread) workers by using the `thread` module:
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    time.sleep(random()) # <= some slow computation
+    return x + 1
+def slow_gt3(x):
+    time.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.thread.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.thread.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+Here we have the exact same situation as in the previous case except that the worker are Threads.
+### Tasks
+You can create a pipeline based on [asyncio.Task](https://docs.python.org/3.4/library/asyncio-task.html#asyncio.Task) workers by using the `task` module:
+```python
+import pypeln as pl
+import asyncio
+from random import random
+async def slow_add1(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x + 1
+async def slow_gt3(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.task.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.task.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+Conceptually similar but everything is running in a single thread and Task workers are created dynamically. If the code is running inside an async task can use `await` on the stage instead to avoid blocking:
+```python
+import pypeln as pl
+import asyncio
+from random import random
+async def slow_add1(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x + 1
+async def slow_gt3(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x > 3
+def main():
+    data = range(10) # [0, 1, 2, ..., 9]
+    stage = pl.task.map(slow_add1, data, workers=3, maxsize=4)
+    stage = pl.task.filter(slow_gt3, stage, workers=2)
+    data = await stage # e.g. [5, 6, 9, 4, 8, 10, 7]
+asyncio.run(main())
+```
+### Sync
+The `sync` module implements all operations using synchronous generators. This module is useful for debugging or when you don't need to perform heavy CPU or IO tasks but still want to retain element order information that certain functions like `pl.*.ordered` rely on.
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    return x + 1
+def slow_gt3(x):
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.sync.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.sync.filter(slow_gt3, stage, workers=2)
+data = list(stage) # [4, 5, 6, 7, 8, 9, 10]
+```
+Common arguments such as `workers` and `maxsize` are accepted by this module's functions for API compatibility purposes but are ignored.
+## Mixed Pipelines
+You can create pipelines using different worker types such that each type is the best for its given task so you can get the maximum performance out of your code:
+```python
+data = get_iterable()
+data = pl.task.map(f1, data, workers=100)
+data = pl.thread.flat_map(f2, data, workers=10)
+data = filter(f3, data)
+data = pl.process.map(f4, data, workers=5, maxsize=200)
+```
+Notice that here we even used a regular python `filter`, since stages are iterables Pypeln integrates smoothly with any python code, just be aware of how each stage behaves.
+## Pipe Operator
+In the spirit of being a true pipeline library, Pypeln also lets you create your pipelines using the pipe `|` operator:
+```python
+data = (
+    range(10)
+    | pl.process.map(slow_add1, workers=3, maxsize=4)
+    | pl.process.filter(slow_gt3, workers=2)
+    | list
+)
+```
+## Run Tests
+A sample script is provided to run the tests in a container (either Docker or Podman is supported), to run tests:
+```bash
+$ bash scripts/run-tests.sh
+```
+This script can also receive a python version to check test against, i.e
+```bash
+$ bash scripts/run-tests.sh 3.7
+```
+## Related Stuff
+* [Making an Unlimited Number of Requests with Python aiohttp + pypeln](https://medium.com/@cgarciae/making-an-infinite-number-of-requests-with-python-aiohttp-pypeln-3a552b97dc95)
+* [Process Pools](https://docs.python.org/3.4/library/multiprocessing.html?highlight=process#module-multiprocessing.pool)
+* [Making 100 million requests with Python aiohttp](https://www.artificialworlds.net/blog/2017/06/12/making-100-million-requests-with-python-aiohttp/)
+* [Python multiprocessing Queue memory management](https://stackoverflow.com/questions/52286527/python-multiprocessing-queue-memory-management/52286686#52286686)
+* [joblib](https://joblib.readthedocs.io/en/latest/)
+* [mpipe](https://vmlaker.github.io/mpipe/)
+## Contributors
+* [cgarciae](https://github.com/cgarciae)
+* [Davidnet](https://github.com/Davidnet)
+## License
+MIT
+
+%package -n python3-pypeln
+Summary: please add a summary manually as the author left a blank one
+Provides: python-pypeln
+BuildRequires: python3-devel
+BuildRequires: python3-setuptools
+BuildRequires: python3-pip
+%description -n python3-pypeln
+_Pypeln (pronounced as "pypeline") is a simple yet powerful Python library for creating concurrent data pipelines._
+#### Main Features
+* **Simple**: Pypeln was designed to solve _medium_ data tasks that require parallelism and concurrency where using frameworks like Spark or Dask feels exaggerated or unnatural.
+* **Easy-to-use**: Pypeln exposes a familiar functional API compatible with regular Python code.
+* **Flexible**: Pypeln enables you to build pipelines using Processes, Threads and asyncio.Tasks via the exact same API.
+* **Fine-grained Control**: Pypeln allows you to have control over the memory and cpu resources used at each stage of your pipelines.
+For more information take a look at the [Documentation](https://cgarciae.github.io/pypeln).
+
+## Installation
+Install Pypeln using pip:
+```bash
+pip install pypeln
+```
+## Basic Usage
+With Pypeln you can easily create multi-stage data pipelines using 3 type of workers:
+### Processes
+You can create a pipeline based on [multiprocessing.Process](https://docs.python.org/3.4/library/multiprocessing.html#multiprocessing.Process) workers by using the `process` module:
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    time.sleep(random()) # <= some slow computation
+    return x + 1
+def slow_gt3(x):
+    time.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.process.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.process.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+At each stage the you can specify the numbers of `workers`. The `maxsize` parameter limits the maximum amount of elements that the stage can hold simultaneously.
+### Threads
+You can create a pipeline based on [threading.Thread](https://docs.python.org/3/library/threading.html#threading.Thread) workers by using the `thread` module:
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    time.sleep(random()) # <= some slow computation
+    return x + 1
+def slow_gt3(x):
+    time.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.thread.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.thread.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+Here we have the exact same situation as in the previous case except that the worker are Threads.
+### Tasks
+You can create a pipeline based on [asyncio.Task](https://docs.python.org/3.4/library/asyncio-task.html#asyncio.Task) workers by using the `task` module:
+```python
+import pypeln as pl
+import asyncio
+from random import random
+async def slow_add1(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x + 1
+async def slow_gt3(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.task.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.task.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+Conceptually similar but everything is running in a single thread and Task workers are created dynamically. If the code is running inside an async task can use `await` on the stage instead to avoid blocking:
+```python
+import pypeln as pl
+import asyncio
+from random import random
+async def slow_add1(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x + 1
+async def slow_gt3(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x > 3
+def main():
+    data = range(10) # [0, 1, 2, ..., 9]
+    stage = pl.task.map(slow_add1, data, workers=3, maxsize=4)
+    stage = pl.task.filter(slow_gt3, stage, workers=2)
+    data = await stage # e.g. [5, 6, 9, 4, 8, 10, 7]
+asyncio.run(main())
+```
+### Sync
+The `sync` module implements all operations using synchronous generators. This module is useful for debugging or when you don't need to perform heavy CPU or IO tasks but still want to retain element order information that certain functions like `pl.*.ordered` rely on.
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    return x + 1
+def slow_gt3(x):
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.sync.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.sync.filter(slow_gt3, stage, workers=2)
+data = list(stage) # [4, 5, 6, 7, 8, 9, 10]
+```
+Common arguments such as `workers` and `maxsize` are accepted by this module's functions for API compatibility purposes but are ignored.
+## Mixed Pipelines
+You can create pipelines using different worker types such that each type is the best for its given task so you can get the maximum performance out of your code:
+```python
+data = get_iterable()
+data = pl.task.map(f1, data, workers=100)
+data = pl.thread.flat_map(f2, data, workers=10)
+data = filter(f3, data)
+data = pl.process.map(f4, data, workers=5, maxsize=200)
+```
+Notice that here we even used a regular python `filter`, since stages are iterables Pypeln integrates smoothly with any python code, just be aware of how each stage behaves.
+## Pipe Operator
+In the spirit of being a true pipeline library, Pypeln also lets you create your pipelines using the pipe `|` operator:
+```python
+data = (
+    range(10)
+    | pl.process.map(slow_add1, workers=3, maxsize=4)
+    | pl.process.filter(slow_gt3, workers=2)
+    | list
+)
+```
+## Run Tests
+A sample script is provided to run the tests in a container (either Docker or Podman is supported), to run tests:
+```bash
+$ bash scripts/run-tests.sh
+```
+This script can also receive a python version to check test against, i.e
+```bash
+$ bash scripts/run-tests.sh 3.7
+```
+## Related Stuff
+* [Making an Unlimited Number of Requests with Python aiohttp + pypeln](https://medium.com/@cgarciae/making-an-infinite-number-of-requests-with-python-aiohttp-pypeln-3a552b97dc95)
+* [Process Pools](https://docs.python.org/3.4/library/multiprocessing.html?highlight=process#module-multiprocessing.pool)
+* [Making 100 million requests with Python aiohttp](https://www.artificialworlds.net/blog/2017/06/12/making-100-million-requests-with-python-aiohttp/)
+* [Python multiprocessing Queue memory management](https://stackoverflow.com/questions/52286527/python-multiprocessing-queue-memory-management/52286686#52286686)
+* [joblib](https://joblib.readthedocs.io/en/latest/)
+* [mpipe](https://vmlaker.github.io/mpipe/)
+## Contributors
+* [cgarciae](https://github.com/cgarciae)
+* [Davidnet](https://github.com/Davidnet)
+## License
+MIT
+
+%package help
+Summary: Development documents and examples for pypeln
+Provides: python3-pypeln-doc
+%description help
+_Pypeln (pronounced as "pypeline") is a simple yet powerful Python library for creating concurrent data pipelines._
+#### Main Features
+* **Simple**: Pypeln was designed to solve _medium_ data tasks that require parallelism and concurrency where using frameworks like Spark or Dask feels exaggerated or unnatural.
+* **Easy-to-use**: Pypeln exposes a familiar functional API compatible with regular Python code.
+* **Flexible**: Pypeln enables you to build pipelines using Processes, Threads and asyncio.Tasks via the exact same API.
+* **Fine-grained Control**: Pypeln allows you to have control over the memory and cpu resources used at each stage of your pipelines.
+For more information take a look at the [Documentation](https://cgarciae.github.io/pypeln).
+
+## Installation
+Install Pypeln using pip:
+```bash
+pip install pypeln
+```
+## Basic Usage
+With Pypeln you can easily create multi-stage data pipelines using 3 type of workers:
+### Processes
+You can create a pipeline based on [multiprocessing.Process](https://docs.python.org/3.4/library/multiprocessing.html#multiprocessing.Process) workers by using the `process` module:
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    time.sleep(random()) # <= some slow computation
+    return x + 1
+def slow_gt3(x):
+    time.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.process.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.process.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+At each stage the you can specify the numbers of `workers`. The `maxsize` parameter limits the maximum amount of elements that the stage can hold simultaneously.
+### Threads
+You can create a pipeline based on [threading.Thread](https://docs.python.org/3/library/threading.html#threading.Thread) workers by using the `thread` module:
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    time.sleep(random()) # <= some slow computation
+    return x + 1
+def slow_gt3(x):
+    time.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.thread.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.thread.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+Here we have the exact same situation as in the previous case except that the worker are Threads.
+### Tasks
+You can create a pipeline based on [asyncio.Task](https://docs.python.org/3.4/library/asyncio-task.html#asyncio.Task) workers by using the `task` module:
+```python
+import pypeln as pl
+import asyncio
+from random import random
+async def slow_add1(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x + 1
+async def slow_gt3(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.task.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.task.filter(slow_gt3, stage, workers=2)
+data = list(stage) # e.g. [5, 6, 9, 4, 8, 10, 7]
+```
+Conceptually similar but everything is running in a single thread and Task workers are created dynamically. If the code is running inside an async task can use `await` on the stage instead to avoid blocking:
+```python
+import pypeln as pl
+import asyncio
+from random import random
+async def slow_add1(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x + 1
+async def slow_gt3(x):
+    await asyncio.sleep(random()) # <= some slow computation
+    return x > 3
+def main():
+    data = range(10) # [0, 1, 2, ..., 9]
+    stage = pl.task.map(slow_add1, data, workers=3, maxsize=4)
+    stage = pl.task.filter(slow_gt3, stage, workers=2)
+    data = await stage # e.g. [5, 6, 9, 4, 8, 10, 7]
+asyncio.run(main())
+```
+### Sync
+The `sync` module implements all operations using synchronous generators. This module is useful for debugging or when you don't need to perform heavy CPU or IO tasks but still want to retain element order information that certain functions like `pl.*.ordered` rely on.
+```python
+import pypeln as pl
+import time
+from random import random
+def slow_add1(x):
+    return x + 1
+def slow_gt3(x):
+    return x > 3
+data = range(10) # [0, 1, 2, ..., 9]
+stage = pl.sync.map(slow_add1, data, workers=3, maxsize=4)
+stage = pl.sync.filter(slow_gt3, stage, workers=2)
+data = list(stage) # [4, 5, 6, 7, 8, 9, 10]
+```
+Common arguments such as `workers` and `maxsize` are accepted by this module's functions for API compatibility purposes but are ignored.
+## Mixed Pipelines
+You can create pipelines using different worker types such that each type is the best for its given task so you can get the maximum performance out of your code:
+```python
+data = get_iterable()
+data = pl.task.map(f1, data, workers=100)
+data = pl.thread.flat_map(f2, data, workers=10)
+data = filter(f3, data)
+data = pl.process.map(f4, data, workers=5, maxsize=200)
+```
+Notice that here we even used a regular python `filter`, since stages are iterables Pypeln integrates smoothly with any python code, just be aware of how each stage behaves.
+## Pipe Operator
+In the spirit of being a true pipeline library, Pypeln also lets you create your pipelines using the pipe `|` operator:
+```python
+data = (
+    range(10)
+    | pl.process.map(slow_add1, workers=3, maxsize=4)
+    | pl.process.filter(slow_gt3, workers=2)
+    | list
+)
+```
+## Run Tests
+A sample script is provided to run the tests in a container (either Docker or Podman is supported), to run tests:
+```bash
+$ bash scripts/run-tests.sh
+```
+This script can also receive a python version to check test against, i.e
+```bash
+$ bash scripts/run-tests.sh 3.7
+```
+## Related Stuff
+* [Making an Unlimited Number of Requests with Python aiohttp + pypeln](https://medium.com/@cgarciae/making-an-infinite-number-of-requests-with-python-aiohttp-pypeln-3a552b97dc95)
+* [Process Pools](https://docs.python.org/3.4/library/multiprocessing.html?highlight=process#module-multiprocessing.pool)
+* [Making 100 million requests with Python aiohttp](https://www.artificialworlds.net/blog/2017/06/12/making-100-million-requests-with-python-aiohttp/)
+* [Python multiprocessing Queue memory management](https://stackoverflow.com/questions/52286527/python-multiprocessing-queue-memory-management/52286686#52286686)
+* [joblib](https://joblib.readthedocs.io/en/latest/)
+* [mpipe](https://vmlaker.github.io/mpipe/)
+## Contributors
+* [cgarciae](https://github.com/cgarciae)
+* [Davidnet](https://github.com/Davidnet)
+## License
+MIT
+
+%prep
+%autosetup -n pypeln-0.4.9
+
+%build
+%py3_build
+
+%install
+%py3_install
+install -d -m755 %{buildroot}/%{_pkgdocdir}
+if [ -d doc ]; then cp -arf doc %{buildroot}/%{_pkgdocdir}; fi
+if [ -d docs ]; then cp -arf docs %{buildroot}/%{_pkgdocdir}; fi
+if [ -d example ]; then cp -arf example %{buildroot}/%{_pkgdocdir}; fi
+if [ -d examples ]; then cp -arf examples %{buildroot}/%{_pkgdocdir}; fi
+pushd %{buildroot}
+if [ -d usr/lib ]; then
+    find usr/lib -type f -printf "/%h/%f\n" >> filelist.lst
+fi
+if [ -d usr/lib64 ]; then
+    find usr/lib64 -type f -printf "/%h/%f\n" >> filelist.lst
+fi
+if [ -d usr/bin ]; then
+    find usr/bin -type f -printf "/%h/%f\n" >> filelist.lst
+fi
+if [ -d usr/sbin ]; then
+    find usr/sbin -type f -printf "/%h/%f\n" >> filelist.lst
+fi
+touch doclist.lst
+if [ -d usr/share/man ]; then
+    find usr/share/man -type f -printf "/%h/%f.gz\n" >> doclist.lst
+fi
+popd
+mv %{buildroot}/filelist.lst .
+mv %{buildroot}/doclist.lst .
+
+%files -n python3-pypeln -f filelist.lst
+%dir %{python3_sitelib}/*
+
+%files help -f doclist.lst
+%{_docdir}/*
+
+%changelog
+* Tue Apr 11 2023 Python_Bot <Python_Bot@openeuler.org> - 0.4.9-1
+- Package Spec generated
@@ -0,0 +1 @@
+bd260015fdbffe239333c072eb26afe5 pypeln-0.4.9.tar.gz
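
The `%install` section in the spec above builds `filelist.lst` by running `find <dir> -type f -printf "/%h/%f\n"` from inside the build root for each of `usr/lib`, `usr/lib64`, `usr/bin` and `usr/sbin`. As a rough illustration of what that step produces, here is a minimal Python sketch of the same walk. The function name `collect_file_list` and the temporary directory layout are hypothetical and are not part of the spec or of any RPM tooling.

```python
import os
import tempfile


def collect_file_list(buildroot, subdirs=("usr/lib", "usr/lib64", "usr/bin", "usr/sbin")):
    """Return "/usr/..." style paths for every regular file under the given subdirs.

    Hypothetical helper: it only mimics the `find ... -type f -printf "/%h/%f\n"`
    calls in the spec's %install section.
    """
    entries = []
    for sub in subdirs:
        top = os.path.join(buildroot, sub)
        if not os.path.isdir(top):
            continue  # same role as the `[ -d ... ]` guards in the spec
        for dirpath, _dirnames, filenames in os.walk(top):
            for name in filenames:
                full = os.path.join(dirpath, name)
                # `find -type f` matches regular files only, so skip symlinks
                if os.path.islink(full) or not os.path.isfile(full):
                    continue
                rel = os.path.relpath(full, buildroot)
                entries.append("/" + rel.replace(os.sep, "/"))
    return entries


if __name__ == "__main__":
    # Build a throwaway directory tree that stands in for %{buildroot}.
    with tempfile.TemporaryDirectory() as root:
        pkg_dir = os.path.join(root, "usr", "lib", "python3", "site-packages", "pypeln")
        os.makedirs(pkg_dir)
        open(os.path.join(pkg_dir, "__init__.py"), "w").close()
        for path in sorted(collect_file_list(root)):
            print(path)
```

Paths are reported relative to the build root with a leading `/`, which is the form the `%files -f filelist.lst` directive expects. Directories that do not exist are skipped, as in the shell version.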